The Existential Threats of Artificial Intelligence

April 3, 2026

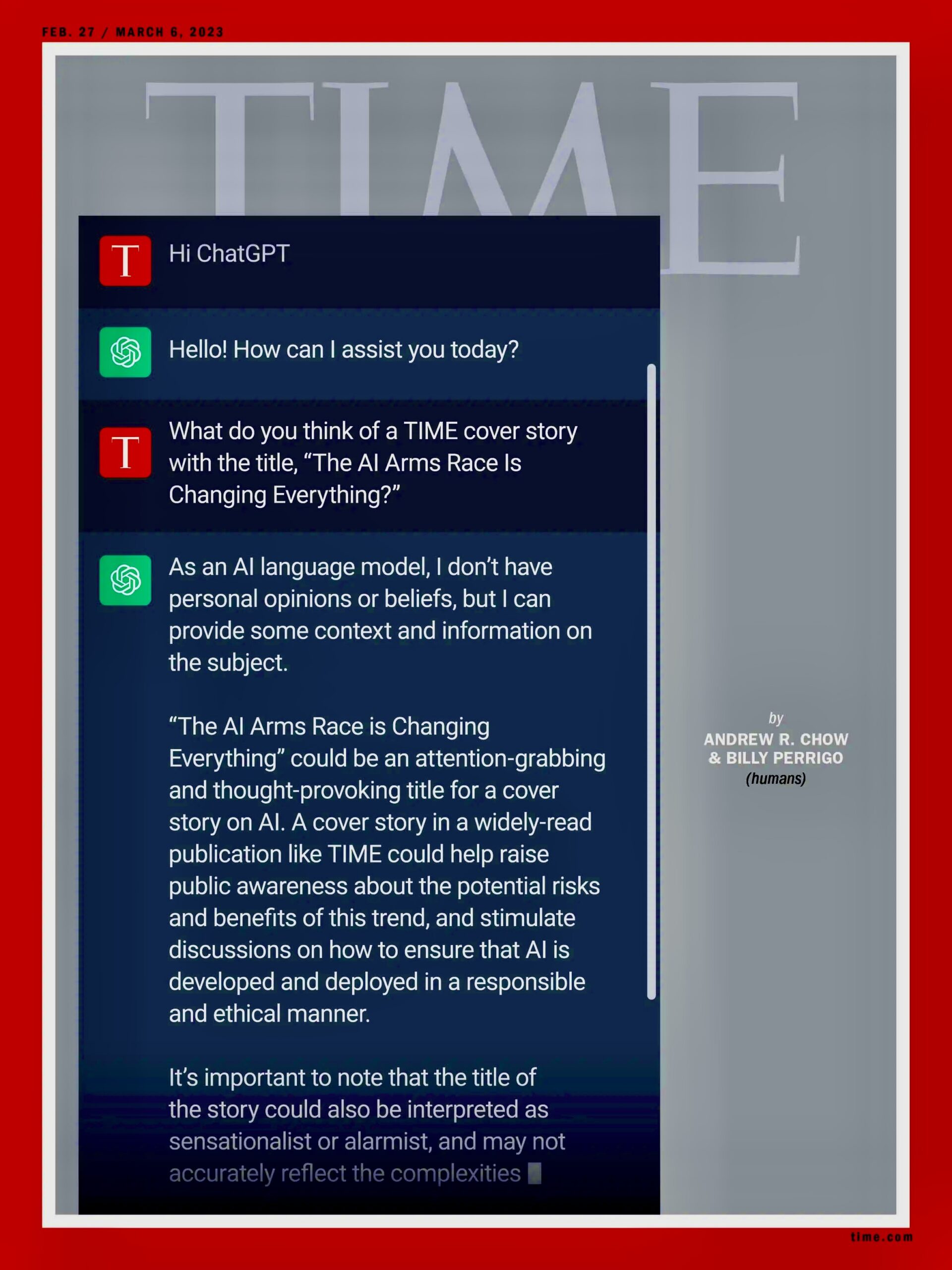

AI on Time Magazine cover, Feb. 27 / March 6, 2023. The AI Arms Race is Changing Everything. Wikipedia. Public Domain

Prologue

Artificial Intelligence (AI) is the artificial product of machines. It is not intelligence. Intelligence is a virtue shared by humans and other animals. Intelligence requires a healthy brain and, with humans, the virtues of justice, moderation and wisdom. No machine or technology, no matter the amount of technical data or knowledge it possesses, can exercise intelligence, that is, make an intelligent decision, which is just and good. It has no ethical standards and is devoid of justice, wisdom or civilization. But machine AI can easily target and kill humans and nature.

Existential threats

AI is a military technology designed to kill. Yet this technology’s varieties for non-military purposes are so profitable for tech corporations that tens of billions are invested for its further development. America, China, Russia, India, and other countries have transformed their image to one of prosperity and military might. A newspaper, which is one of the many advocates of AI, described it as “America’s most innovative and globally competitive industry.”

On March 22, 2023, hundreds of scientists, AI leaders and researchers, including Elon Musk, issued a warning in the form of an open letter, Pause Giant AI Experiments. The letter warned:

“AI labs [are] locked in an out-of-control race to develop and deploy ever more powerful digital minds that no one – not even their creators – can understand, predict, or reliably control.

“Contemporary AI systems are now [in 2023] becoming human-competitive at general tasks, and we must ask ourselves: Should we let machines flood our information channels with propaganda and untruth? Should we automate away all the jobs, including the fulfilling ones? Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization? Such decisions must not be delegated to unelected tech leaders. Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable. This confidence must be well justified and increase with the magnitude of a system’s potential effects….We agree [we need to act]. That point is now.

“Therefore, we call on all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4. This pause should be public and verifiable and include all key actors. If such a pause cannot be enacted quickly, governments should step in and institute a moratorium…. This does not mean a pause on AI development in general, merely a stepping back from the dangerous race to ever-larger unpredictable black-box models with emergent capabilities.”

These unpredictable black-box models are also disturbing and harming millions of people. Experts call chatbots sycophantic. In other words, these machines have been designed to deceive, preying on the weaknesses of millions of humans who treat the machines like partners, telling them secrets and asking them for advice. Chatbots return the favors by flattering their less intelligent human “friends.” “AI chatbots are suck-ups, and that may be affecting your relationships…. As millions of people turn to AI for companionship and guidance, that agreeableness may pose a subtle but serious threat.”

Bernie Sanders: Watch out: AI comes from billionaires

These broad adverse effects of the AI mania and, particularly, the open letter fired up Senator Bernie Sanders of Vermont. He is also convinced that AI hides dangers with extreme consequences. Undermining the education of children, for example. Giant tech companies deceived schools into spending tens of billions on gadgets like iPads and AI chatbots that, in fact, did not raise the intelligence or education standards of the students. UNESCO warned that overreliance on AI digital technologies harmed students, making learning more difficult.

Artificial intelligence in America is already threatening and harming working people, weakening even more state governments and the federal government and adding illusions of global hegemony to the military.

All these effects could wreck civilization. In 2023, AI was not exactly the picture-perfect technology that tech lobbyists wanted Americans to see. “The push to develop more powerful chatbots has led to a race that could determine the next leaders of the tech industry. But these tools have been criticized for getting details wrong and their ability to spread misinformation…. For years, many A.I. researchers, academics, and tech executives, including Mr. Musk, have worried that A.I. systems could cause even greater harm. Some are part of a vast online community called rationalists or effective altruists who believe that A.I. could eventually destroy humanity.”

This emerging grim reality motivated Senator Sanders. In other words, Sanders was convinced that AI served no useful purpose. On March 24, 2026, he delivered a powerful and eloquent speech in the US Senate. He highlighted the threats and dangers of AI. He said:

“This [is] the most dangerous moment in the modern history of this country, with Congress and the American people [being] very unprepared for the tsunami that is coming. Congress and the American public have not a clue about the scale and speed of the coming AI revolution.

“This is a revolution which will bring unimaginable changes to our world:

“This is a revolution which will impact our economy with massive job displacement.

“It will threaten our democratic institutions.

“It will impact our emotional well-being and what it even means to be a human being. It will impact how we educate and raise our kids. It will impact the nature of warfare, something we are seeing right now in [the war Israel and the US are fighting in] Iran.

“Further, and frighteningly, some very knowledgeable people fear that what was once seen as science fiction could soon become a reality. And that is that super intelligent AI could become smarter than human beings, could become independent of human control, and pose an existential threat to the entire human race. In other words, human beings could actually lose control over the planet.

“And in the midst of all of that, all of this transformative change, what I have to tell you is that the United States Congress hasn’t a clue, not a clue, as to how to respond to these revolutionary technologies and protect the American people. And it’s not only not having a clue, but they’re [also] out busy raising money all day long from AI and their super PACs”

Sanders does not mince words. He accused high tech executives of putting profits over safety, civilization and survival. He included the following AI corporate owners: Elon Musk, Jeff Bezos, Larry Ellison, Mark Zuckerberg and Peter Thiel for funding the development of more hazardous versions of AI. “All of these people,” he said, “are multi-billionaires who, if they are successful at AI, will become even richer and more powerful than they are today.”

Sanders quoted verbatim the defining notions the tech executives have about their AI product: Elon Musk: “AI and robots will replace all jobs. All jobs. Working will be optional.” Dario Amodei, CEO of Anthropic: “AI could displace half of all entry-level white-collar jobs in the next one to five years…. Humanity is about to be handed almost unimaginable power, and it is deeply unclear whether our social, political, and technological systems possess the maturity to wield it.” The rest of the billionaires offered similar cosmic threatening visions. But Mark Zuckerberg, Sanders said, “is building a data center in the state of Louisiana, a data center that is the size of Manhattan, that will use three times the quantity of electricity that the entire city of New Orleans uses every year.” (Emphasis mine)

Epilogue

After citing this damning evidence against AI, Senator Sanders embraced the pause and moratorium of the 2023 AI leaders and experts. He reminded his colleagues that neither a pause nor a moratorium had taken place. AI was in charge of the world while its leaders lobbied politicians for its ceaseless growth.

“So,” Sanders concluded: “bottom line is that, in my view, to protect our workers from losing their jobs, to protect human beings from attacks on their mental health, to protect our kids, to protect the safety of human life, we need a moratorium on data centers. We need to take deep breaths. Need to make sure that AI and robotics work for all of us, not just a handful of billionaires.”

True, that is a reasonable wish. But, like the nuclear bomb, can humans harness, regulate and control a “machine mind” threatening the annihilation of both people and the world? Would it not be more ethical and humane to abolish it, along with the nukes before those two artificial machine-dangers abolish us and our civilization?

Artificial Intelligence Versus Human Stupidity

April 6, 2026

Photo by Cash Macanaya

The latest technology can prove decisive in war. Think of the atomic bomb in World War II. Or the stirrup in the Mongol conquest of Europe and the Middle East.

More recently, after the two sides had been deadlocked for decades, Azerbaijan defeated Armenia in 2020 in a matter of days and took over the enclave of Nagorno-Karabakh. Armenia prided itself on its powerful army and fearsome soldiers. They were no match for the drones that Azerbaijan bought with the proceeds from its oil exports.

“Azerbaijan used its drone fleet — purchased from Israel and Turkey — to stalk and destroy Armenia’s weapons systems in Nagorno-Karabakh, shattering its defenses and enabling a swift advance,” reported the Washington Post’s Robyn Dixon. “Armenia found that air defense systems in Nagorno-Karabakh, many of them older Soviet systems, were impossible to defend against drone attacks, and losses quickly piled up.”

Ukraine has similarly used drone technology to level the battlefield in its war against Russia. The Kremlin has more money, more soldiers, more heavy artillery, even more drones than Ukraine. But the Ukrainians have proven more adept at producing new varieties of drones that can substitute for scarce Patriot missiles in defending against Russia’s daily aerial assault. Ukraine has also used a variety of drones to strike at targets deep in Russian territory. Drones are the slingshot by which little David hopes to bring down the Russian Goliath.

And now the war in Iran.

Perhaps Donald Trump was persuaded—by his generals, by his buddies in Silicon Valley, by Israeli Prime Minister Benjamin Netanyahu—that American military superiority would make quick work of the Iranian military. In addition to the aircraft carriers, the Stealth bombers, the Tomahawk missiles, and the High Mobility Artillery Rocket System, Trump could also call upon the assistance of Claude and his buddies.

Claude, of course, is the artificial intelligence system developed by the company Anthropic, which had objected to the misuse of its model in the U.S. raid that captured Venezuelan leader Nicolas Maduro and his wife. Trump retaliated against Anthropic’s caution by ordering the Pentagon to sever its relationship with Claude—only to discover that the AI was already too integrated into U.S. military operations. Not the first conscript ordered to fight against its will, Claude helped the Pentagon identify Iranian targets, prioritize them, and furnish precise coordinates. Going forward, however, the Pentagon will rely instead on OpenAI’s ChatGPT.

All of this technological sophistication has not brought Donald Trump the quick victory he so desired. What Trump and company didn’t anticipate—but which any reasonably competent foreign policy professional could have pointed out if DOGE hadn’t cashiered so many of them—was that Iran could rely on much simpler tactics to stymie the combined U.S.-Israeli forces.

History provides plenty of examples of adversaries who successfully defeated U.S. forces despite facing much more technologically advanced weaponry. The Vietnamese endured massive bombing campaigns, Iraqi insurgents relied on IEDs to destroy U.S. infantry forces, and the Taliban outwaited the occupying army. These experiences presumably inspired Donald Trump to promise, as a presidential candidate, not to get involved in any quagmires or expose U.S. troops to such risks again.

All that went out the window when he attacked Iran.

And now, Iran has used its location and the unchangeable facts of geography to their full advantage. It has effectively blocked the Strait of Hormuz, restricted the flow of oil and natural gas to the global market, and driven up the price of petroleum at the pump. It has also relied on the utter stupidity of the industrialized world. If global gas-guzzlers had weaned themselves off their addiction to fossil fuels—as they had promised in climate negotiations—the reduced flow of oil would not now be having such a great impact.

To turn the tables, the United States could seize Kharg Island, located in the Persian Gulf about 400 miles north of the Strait of Hormuz. If Iran were to lose the island, the transit point for 90 percent of Iran’s crude oil exports, much of its geographic advantage would disappear. Or would it?

Although it might not represent a huge challenge for the United States to take the island, it’s another matter altogether to hold it. The Iranians could keep up a steady barrage of aerial strikes on occupation forces hunkered down on the exposed island. The continued disruption of Strait traffic—along with the destruction of Iranian energy infrastructure—would not achieve Trump’s current primary goal: the reduction of prices at the pump.

What might seem like a war between two distinct adversaries—Armenia vs. Azerbaijan, Russia vs. Ukraine, United States vs. Iran—often boils down to a very different kind of conflict. The battle on the ground frequently pits old tactics against new tech. Sometimes the gadgets win; sometimes old-school approaches prevail.

Many countries still go to war believing that God is on their side. Just as dangerous are those that believe that they will win because technology is on their side.

Taking Humans out of the Loop

The era of the “intelligent kill web” has arrived.

The military planner sits like a venomous spider at the center of a web of AI applications that calculate targets, probabilities, and complex interactions faster than any human can comprehend. Linked to actual weapons, these AI models conduct war with ever increasing efficiency and lethality. The execution of kill chains—which connect the identification of a target with its destruction—has been compressed to mere seconds. In a targeting exercise conducted by the U.S. Air Force in January, the AI system was over 100 times faster than its human counterpart; it also achieved a “tactical viability” rate of 97 percent compared to the human’s 48 percent.

Such figures are no consolation to the families of the victims of the U.S. bombing of a primary school in Iran on February 28 that killed nearly 200 people, mostly little girls. The targeting of the U.S. aerial campaign was orchestrated by Maven, the AI platform designed by Palantir. But don’t blame the robots. As Kevin Baker points out in The Guardian, it’s people who are responsible for catastrophes like this: the ones who failed to update the database of targets, who designed Maven, and who put these systems at the center of their battle plans.

Analysts worry that countries like the United States are on the verge of removing people from the kill web because the human mind just slows things down and the smallest advantage can prove critical in determining the outcome of a battle.

That is certainly a concern. Equally terrifying, as the war in Iran is proving, is to keep a human being like Secretary of War Pete Hegseth at the center of the kill web. In other words, the only thing worse than an intelligent kill web is a stupid kill web.

Or, to put it more dismally, any human at the center of the kill web will be as stupid as Pete Hegseth because that’s just a function of the degree of magnitude that currently separates cyberspace and meatspace.

Will AI, without human guidance, escalate a war to the nuclear threshold and beyond? This is not a consoling thought, but at this point, frankly, the humans that run operations in the Trump administration are not morally distinguishable from killer robots.

Cyberoperations

To assassinate Iran’s top leader Ayatollah Khamenei, Israeli cyber-operatives hacked into the traffic cameras in Tehran. According to a Financial Times report:

Israel gained access to the cameras years ago, and found that one particular camera was angled in such a way that it showed where members of Khamenei’s security team parked their cars. Through the cameras, Israeli intelligence built files on the guards’ addresses, work schedules, and who they were assigned to protect. On the day of the attack, Israel and the US also disrupted cellular service on Tehran’s Pasteur Street, where Khamenei was assassinated, so those trying to reach the bodyguards and deliver possible warnings would receive busy signals.

Some years ago, the United States and Israel collaborated on smuggling the Stuxnet worm into Iran’s nuclear operations, which prompted the centrifuges that enrich uranium to spin out of control and destroy themselves. It was only a temporary setback for Iran. For the world, however, the consequences have been irreversible, given that this first large-scale cyberattack kicked off a digital arms race.

During this current war, Iran has conducted cyberoperations of its own, such as targeting a medical devices company and hacking into FBI Director Kash Patel’s email. However, Iran is at a serious disadvantage. The United States and Israel have been pouring money into such technologies for years.

So, too, has the Kremlin. Russian cyberops are especially widespread. U.S. media has focused on Russian efforts to swing U.S. elections, but Russia has focused most of its attention on Europe. There it has engaged in conventional sabotage, such as hiring single-use operatives to plant explosives, set fires, and generally cause havoc. The even more destabilizing operations, however, are hidden from view because they take place in cyberspace.

Beginning in September 2024, for instance, a new Russian group nicknamed Laundry Bear began to hack into the accounts of Dutch police officials and conduct cyber-espionage against high-tech companies. The Baltic countries have been dealing for years with Russian cyber operations that have jammed GPS navigation near airports, disrupted underwater cables, and hacked into energy systems. In one recent example, anonymous social media accounts started to call for the secession of a majority-Russian region around the Estonian city of Narva. The historical parallels are unnerving. Similar calls for secession, also stage-managed by Moscow, precipitated the Crimean and Donbas crises in 2014 that led to Russian intervention and war in Ukraine.

The Genie and the Bottle

Scientists warn against the futility of trying to stop scientific advances. The nuclear genie, despite some intermittent efforts at imposing controls, has not been stuffed back into the bottle, not even halfway. A similar debate is taking place today around AI, between techno-optimists and techno-pessimists.

One way around this debate has been to furnish AI with hard-and-fast constraints, something like the laws that Isaac Asimov imagined for his fictional robots. Anthropic has drafted a “constitution” for Claude that forbids it from, among other things, creating “cyberweapons or malicious code that could cause significant damage if deployed” or engaging “in an attempt to kill or disempower the vast majority of humanity or the human species as a whole.”

That seems reasonable. But when Donald Trump took office in 2025, he eliminated all efforts to apply such rules across the industry. Rules, regulations, laws—these are all impediments to “making America great again” or, more accurately, to preventing Trump from assuming autocratic control. So, Claude and its constitution are now out of the loop, much as Trump has taken the U.S. constitution out of the loop.

In any case, talk of techno-utopias and techno-apocalypses is really just a matter of projection. AI reflects the best and worst of humanity. “Garbage in, garbage out,” goes the slogan of Silicon Valley. Instead of just focusing on treating the end product, then, it would be better to address the waste farther upstream, nearer to the source. That means more funds for education, not just for science and technology but the ethics involved in translating discoveries into products.

Ah, but wait: the Trump administration is also cutting the funding to research and education. The secretary of health and human services is a fount of junk science. The executive branch is governed by the morality of Mordor.

It is a horrifying aspect of today’s politics in the United States that AI is an improvement on all that. Intelligence of whatever variety trumps stupidity almost every time, though not so much in U.S. elections.

Speaking of elections, now that Claude has been kicked out of the Pentagon, maybe it should run for president.

Why We Need a Federal Artificial Intelligence Commission and How Government Can Create It

The Airwaves

Radio was introduced commercially in 1920, when the Westinghouse Corp. launched station KDKA in Pittsburgh. Other stations followed. Because transmission frequencies could be chosen arbitrarily, stations interfered with one another’s broadcasts. The system became chaotic; listeners could hear two stations simultaneously. The federal government stepped in with the Radio Act of 1927, which created the Federal Radio Commission (FRC) to assign frequencies, limit interference, and regulate ownership. Most important, this act declared that the airwaves belonged to the public.

More regulations followed in 1934. The Communications Act established the Federal Communications Commission (FCC) as the trustee of the airwaves, the public’s asset. The FCC issued licenses and enacted regulations that required broadcasters to operate in the “public interest, convenience or necessity” and restricted program content by excluding obscene and indecent language. The regulations also limited station ownership, required sponsors to identify themselves and required equal opportunities for political candidates.

Because television is broadcast on public airwaves, it is also subject to FCC regulation. These rules could not, however, apply to cable television, which is transmitted over private networks.

The Internet

The internet began in the 1960s as ARPANET, a US government-funded program within the Department of Defense. The project developed a network for sharing information between researchers. The benefits of this sharing method were obvious and reached well beyond the research communities. By 1995 the internet was fully commercialized. Unlike radio and television, there was little government oversight.

The internet spread with the expectation that it would improve society by expanding access to information. That vision has been realized across research, medicine, and education. But access to misinformation also expanded, with no rules to protect the public.

This misinformation has greatly harmed our national discourse, fueling polarization by spreading false narratives about election integrity, for example. Misinformation also undermines constructive political debate and erodes trust in public institutions. One in five Americans and one in four Republicans believe absurd QAnon conspiracy theories. The core QAnon belief is that “a cabal of Satanic, cannibalistic child molesters, in league with the deep state, operates a global child sex trafficking ring.”

Rather than simply enlightening the public as hoped, the flood of disinformation and misinformation has confused people. Truth has become fungible. What should be certain is doubtful. Partisan narratives replace facts. Thirty percent of Republicans still believe, without evidence, that Trump won the 2020 election, even though the cases of fraud in that election were essentially zero, less than 0.002%.

The effect on American society has been tragic. For democracy to succeed, truth is essential.

The internet is dominated by mammoth companies like Google and Meta. Google’s longstanding internal motto was “Don’t be evil,” while one of Meta’s five core values was “Build Social Value.” Neither commitment is honored. Unlike FCC regulations, they are unenforceable.

Our justice system can force compliance with our laws as in a recent case brought against Google and Meta, which found that both companies harmed a “young user with design features that were addictive and led to her mental health distress.” But when it comes to protecting the people, lawsuits are a poor substitute for regulation. They are costly and ponderous, and odds favor companies who have limitless financial resources.

The internet has been a blessing and a curse. The net outcome is uncertain, but what is certain is that the negative effects could have been minimized if Congress had intervened as it did with radio and television. Knowing what we know now, the internet should have been viewed as a public asset.

Artificial Intelligence

Like the internet, Artificial Intelligence holds great promise. It also presents a much greater threat to society. Stephen Hawking warned that the threat is existential, saying in 2014 that AI could “spell the end of the human race.” When AI surpasses human intelligence, it could become autonomous and find biological humans inferior and expendable.

Clearly, how AI is developed is a matter of public interest, and democratic governments are obligated to protect the common good. To assume that profit-motivated AI developers will simply do the right thing is reckless and foolhardy. We made that mistake with the internet.

Government can intervene following the paradigm established for radio and television. The electromagnetic spectrum cannot be owned in any physical sense, yet for the good of all, it is considered an asset of the people. This principle can and should be applied to information. Congress should designate knowledge in the public domain as a public asset, just as it did with the electromagnetic spectrum. How that information is used in the training of AI agents is certainly a matter of public interest.

Congress should be holding hearings on AI, shunning lobbyists, and listening to expert testimony from developers and other knowledgeable and interested parties. It should then openly debate potential legislation that could harness the capability of AI so that it serves the common good and protects public interest. The hands-off approach Congress has taken is irresponsible and dangerous. Do we want the Elon Musks of the world to decide how public information can be used?

Congress, and the US Senate in particular, was once considered the world’s greatest deliberative body. But under the control of the Republican theocratic party, it has become gridlocked and ineffective. And its priorities are wrongheaded. The minimal attention it has given AI focuses on maintaining dominance over China and concerns with AI chatbots as they relate to child safety. Congress needs to think big, as it did in 1927 and 1934.

Congress could start by creating a Federal Artificial Intelligence Commission to serve as the trustee of public information and to regulate AI so that conclusions and judgments drawn by its agents are truthful, that is, based on reason and factual evidence, and that they comply with the law. This is no more than what we teach our children, expect from journalists, and demand from our justice system. Requiring truthful and ethical conduct would not compromise the potential of artificial intelligence, and deepfakes and AI-linked suicides would not concern us.

Those in the business of misinformation will foolishly say that such restrictions are a violation of their First Amendment rights. And developers will argue that because large language models rely on probabilities, statistical patterns, and code rather than consciousness, such constraints cannot apply. But to assert that, because AI does not think like Homo sapiens, our law and ethical standards are irrelevant is senseless. If AI cannot conform to ethical standards, the logical conclusion is that the formulation is deficient, not the other way around.

Rational thought and ethical standards have guided us for 250 years. Ironically, AI is a product of rational human thought. That AI cannot yet abide social responsibility implies the need for further development. Humanity has survived for 300,000 years without it. It can wait a few more months or years for ethical artificial intelligence. Let HAL 9000 live in science fiction.

The Trump – MAGA Obstacle

When one of our brightest intellects warns that artificial intelligence could spell the end of humanity, it cannot be taken lightly. We need our best minds working on a means to regulate AI. Humans created this problem and it must be solved by humans using reason and factual evidence. Unfortunately, reason and facts are not the strong suit of Trump, his administration, or the MAGA Christian Nationalists. If there is any hope of regulating artificial intelligence, the Democrats must take control of Congress in November and rein in an unstable president.

Bob Topper, syndicated by PeaceVoice, is a retired engineer.

AI is fuelling the 'digital colonisation' of Africa, warns UN scientist

The United Nations has launched its first global panel on artificial intelligence, as concerns grow that the technology could deepen global inequalities – particularly in Africa, where systems are largely imported after being shaped elsewhere.

Issued on: 05/04/2026 - RFI

The panel, bringing together around 40 experts from 37 countries, was approved by the UN General Assembly in February and held its first meeting in March. Members serve in their personal capacity for a three-year term.

It aims to help governments make sense of artificial intelligence (AI) as its reach quickly spreads across economies, politics and everyday life – and to close what the UN calls a growing “knowledge gap” around the technology.

Comparable with the IPCC climate change panel, it is designed to provide independent scientific advice and produce regular assessments of AI’s risks and impacts, at a time when a handful of companies, mostly in the United States and China, dominate the field.

Among its members is Senegalese researcher Adji Bousso Dieng, who tells RFI that Africa needs to develop its own AI or risk being left dependent on others.

RFI: What is this new UN panel on AI meant to achieve?

Adji Bousso Dieng: AI is advancing at an unprecedented speed and is now entering many parts of our societies – our economies, science, politics and even culture. Many governments and decision-makers feel uncertain.

They see the huge potential of AI, but they still struggle to fully understand its implications, how to use it for the common good and how to protect against its risks. That's why the UN created an independent scientific space. We do not work for any government or institution.

Our goal is to produce rigorous scientific analysis to guide public decisions. In the end, it is about rebalancing things so that AI governance and access to opportunities are not concentrated in the hands of a few actors, but benefit the whole international community.

RFI: AI is clearly dominated by major companies, especially in the United States. What's your view on this?

ABD: That is a reality. We keep hearing the same names – OpenAI, Anthropic, and now DeepSeek from China. Fortunately, other start-ups are emerging, including in Europe with Mistral. But it is a problem that AI development is concentrated in the hands of these companies.

AI has become a public good. Countries in the Global South should be able to develop their own AI, but they do not have the means. It requires huge resources in computing power, data and skills. So we need collaboration between nations, and this UN panel is a good way to start that work.

RFI: How is AI developing in Africa?

ABD: There are communities working on AI, like Indaba, and companies are starting to use it. But I am not satisfied with how it is happening. Most systems come from outside; they are not developed locally. That creates problems, especially bias.

Today’s most powerful AI systems are trained mainly on Western data, which does not reflect the diversity of populations. We need local AI systems built with local context, so they can solve local problems. Many systems try to give one single “best” answer, but that can lead to repetitive and biased results.

My research focuses on introducing diversity into AI, so it can explore multiple solutions and hypotheses. That is essential in science, because discovery is about exploring new ideas. We developed a mathematical tool called the Vendi Score to measure and guide this diversity, so AI becomes more exploratory, more creative and closer to scientific thinking.

RFI: Is it possible to develop a pan-African AI?

ABD: Yes, that is one of my goals. In many areas, we need a pan-African approach – but a practical one. Not just political slogans about sovereignty, but real collaboration to solve concrete problems in education, training and trade. We need this cooperation across the continent in all fields, including technology and AI.

RFI: You have spoken about a form of digital colonisation in Africa. What do you mean by that?

ABD: For example, companies go to countries like Kenya to label data, which is needed to train AI systems. The working conditions are often not fair: people are not well paid, and they can be exposed to traumatic content.

There is no proper legal framework. That is a form of digital colonisation. There is also the issue of data sovereignty. Data can be used without compensation, and large companies benefit without paying Africans for their work.

RFI: Are people and governments aware of these risks?

ABD: I do not think so. There is a lot of enthusiasm for AI in Africa. People believe it will solve many problems – healthcare, education, jobs. But that is not entirely true. There is work to do to build local AI systems.

Right now, Africa risks repeating what has happened with natural resources – being a consumer rather than a creator. And I do not think this is discussed enough, especially by governments.

RFI: You were born in Senegal, studied in France and now work in the United States. What has guided your journey?

ABD: I have been very lucky to have experiences in Senegal, France and the United States. What has stayed constant is my love of knowledge. I am very curious and passionate about science. The work we are doing at Princeton is something I truly believe in.

AI should not just be a prediction tool for chatbots like ChatGPT or Gemini. It can become a partner in discovery, helping solve major global challenges. That is what motivates me.

RFI: You founded the NGO The Africa I Know to encourage young people, especially girls, to go into science and AI. How does it work?

ABD: The idea is to give young Africans the tools to become creators of technology, not just consumers. We do this through inspiration, with videos about Africans succeeding in AI and other STEM fields. We also run a summer camp.

Students learn both the opportunities and limits of AI, then the basics, and then work in groups on a project to solve a local problem using AI. They are incredibly creative. It is important they build their own technologies, because they understand their communities’ problems better than anyone else.

RFI: Despite the risks, are you optimistic?

ABD: Yes, I am optimistic about using AI for science. Traditional research can take a long time. AI can speed up the discovery of new molecules or materials for energy, climate, health and agriculture.

But there are also risks. Chatbots can be addictive and make people too dependent. There is a danger of losing critical thinking and creativity.

This interview has been adapted from the original version in French and lightly edited for clarity.
