Russian embassy shares AI-generated image of Julian Assange in prison

Aude Dejaifve

Mon, 10 April 2023

© Observers

The Russian Embassy in Kenya shared an image on Twitter on April 6 showing an exhausted-looking Julian Assange, the founder of WikiLeaks, who has been incarcerated in the United Kingdom since 2019. However, it turns out that one of his supporters generated the image using artificial intelligence.

If you only have a minute:

The Russian Embassy in Kenya tweeted an image of a weak and ill-looking Julian Assange in a bid to complain about the embattled WikiLeaks founder’s conditions of imprisonment in the UK.

However, we noticed some aspects of the image that made us suspect that it wasn’t an actual photo but rather a fake image.

It turns out that a Twitter account that supports Assange used artificial intelligence to generate the image in order to raise awareness about his imprisonment.

The fact-check, in detail

The same image was shared on March 31 in a tweet in French, which has since garnered more than 191,000 views.

Artist uses AI to reimagine world’s most famous billionaires if they were born poor

Namita Singh

Mon, 10 April 2023

An Indian artist used artificial intelligence to create portraits of famous billionaires – from Elon Musk to Mukesh Ambani – imagining the world’s wealthiest people as poor.

Digital artist Gokul Pillai used artificial intelligence programme Midjourney to create portraits of Bill Gates, Mark Zuckerberg, Warren Buffett, Jeff Bezos, Elon Musk, and Mukesh Ambani as “slumdog billionaires”.

His portrayal of the Microsoft co-founder as a lanky old man standing bare-chested outside a shanty, wearing nothing but a grey colored loin cloth, was liked over 10,000 times.

Indian billionaire Ambani, in this alternative reality, is not dressed in his tweed suit but instead wears an old, seemingly unwashed and oversized T-shirt and blue trousers as he stands next to a garbage dump.

His post also featured the AI-generated image of Meta chief Zuckerberg in a dusty T-shirt and blue shorts standing in the middle of a slum, while American businessman Buffett is seen wearing a slightly dirty white T-shirt tucked into unzipped trousers.

“This is epic,” wrote a user.

“Warren Buffet is looking rich here as well,” said another.

Earlier this month, AI-generated images of Mr Zuckerberg walking the ramp in flashy Louis Vuitton clothes flooded social media.

In March, AI enthusiast Jyo John Mulloor posted a series of images on Instagram portraying Game of Thrones characters Daenerys Targaryen, Jon Snow, and Arya Stark in royal Indian attire.

"If George RR Martin has hired an Indian costume designer for Game of Thrones (sic)," he captioned.

An AI-generated image of Pope Francis looking stylish in a large white puffer coat also went viral on social media last month, leaving viewers shocked.

Many social media users, including model Chrissy Teigen, expressed confusion over whether or not the fashionable photograph was real.

“I thought the Pope’s puffer jacket was real and didn’t give it a second thought. No way am I surviving the future of technology,” wrote Teigen.

Another AI-generated image showing Mr Trump wearing an orange prison jumpsuit went viral amid the 34 felony charges brought against him for allegedly falsifying business records related to a hush money payment to adult film star Stormy Daniels in the run-up to the 2016 presidential election.

Fake images have sparked concern among lawmakers and experts, who fear they could spread harmful disinformation.

Why a fake Pope picture could herald the end of humanity

Matthew Field

Mon, April 10, 2023

AI-generated fake image of the Pope in white puffer jacket fooled the internet - Pablo Xavier

For a moment the internet was fooled. An image of Pope Francis in a gleaming white, papal puffer jacket spread like wildfire across the web.

Yet the likeness of the unusually dapper 86-year-old head of the Vatican was a fake. The phoney picture had been created as a joke using artificial intelligence technology, but was realistic enough to trick the untrained eye.

AI fakes are quickly spreading across social media as the popularity of machine-learning tools surges. As well as invented images, chatbot-based AI tools such as OpenAI’s ChatGPT, Google’s Bard and Microsoft’s Bing have been accused of creating a new avenue for misinformation and fake news.

These bots, trained on billions of pages of articles and millions of books, can provide convincing-sounding, human-like responses, but often make up facts - a phenomenon known in the industry as hallucinating. Some AI models have even been taught to code, unleashing the possibility they could be used for cyber attacks.

On top of fears about false news and “deep fake” images, a growing number of future gazers are concerned that AI is turning into an existential threat to humanity.

Scientists at Microsoft last month went so far as to claim one model, GPT-4, had “sparks of… human-level intelligence”. Sam Altman, the chief executive of OpenAI, the US start-up behind the ChatGPT technology, admitted in a recent interview: “We are a little bit scared of this”.

Now, a backlash against so-called “generative AI” is brewing as Silicon Valley heavyweights clash over the risks, and potentially infinite rewards, of this new technological wave.

A fortnight ago, more than 3,000 researchers, scientists and entrepreneurs including Elon Musk penned an open letter demanding a six-month “pause” on development of OpenAI’s most advanced chatbot tool, a so-called “large language model”, or LLM.

Elon Musk - Taylor Hill/Getty Images

“We call on all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4,” the scientists wrote. “If such a pause cannot be enacted quickly, governments should step in and institute a moratorium.”

Musk and others fear the destructive potential of AI, and in particular that an all-powerful “artificial general intelligence” could pose profound dangers for humanity.

But their demand for a six-month ban on developing more advanced AI models has been met with scepticism. Yann LeCun, the top AI expert at Facebook-parent company Meta, compared the attempt to the Catholic church trying to ban the printing press.

“Imagine what could happen if the commoners get access to books,” he said on Twitter.

"They could read the Bible for themselves and society would be destroyed.”

Others have pointed out that several of the letter's signatories have their own agenda. Musk, who has openly clashed with OpenAI, is looking to develop his own rival project. At least two signatories were researchers from DeepMind, which is owned by Google and working on its own AI bots. AI sceptics have warned the letter buys into the hype around the latest technology, with statements such as “should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us?”

Many of the AI tools currently in development are effectively black boxes, with little public information on how they actually work. Despite this, they are already being incorporated into hundreds of businesses. OpenAI’s ChatGPT, for instance, is being harnessed by the payments company Stripe, Morgan Stanley bank and buy-now-pay-later company Klarna.

Richard Robinson, founder of legal start-up RobinAI, which is working with $4bn AI company Anthropic, another OpenAI rival, says even the builders of large language models don’t fully understand how they work. However, he adds: "I also think that there’s a real risk regulators will overreact to these developments.”

Government watchdogs are already limbering up for a fight with AI companies over privacy and data worries. In Europe, Italy has threatened to ban ChatGPT over claims it has scraped information from across the web with little regard for consumers' rights.

Italy’s privacy watchdog said the bot had “no age verification system” to stop access by under 18s. Under European data rules, the regulator can impose fines of up to €20m (£18m), or 4pc of OpenAI’s turnover, unless it changes its data practices.
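The fine ceiling mentioned above can be illustrated with a small calculation. This is only a sketch: it assumes the top-tier rule in the GDPR, under which the cap is €20m or 4pc of a company's total worldwide annual turnover, whichever is higher. The turnover figures below are hypothetical and are not OpenAI's actual numbers.

```python
def max_gdpr_fine_eur(annual_turnover_eur: float) -> float:
    """Upper limit of a top-tier GDPR fine for a given annual turnover.

    Assumption: the cap is EUR 20m or 4% of turnover, whichever is higher.
    """
    flat_cap_eur = 20_000_000
    turnover_cap_eur = annual_turnover_eur * 4 / 100
    return max(flat_cap_eur, turnover_cap_eur)


# Hypothetical turnover figures, chosen only to show both branches of the rule.
for turnover in (100_000_000, 1_000_000_000):
    cap = max_gdpr_fine_eur(turnover)
    print(f"turnover EUR {turnover:,} -> maximum fine EUR {cap:,.0f}")
```

For a smaller company the flat €20m limit applies, while for a larger one the 4pc share becomes the binding figure.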

In response, OpenAI said it had stopped offering ChatGPT in Italy. “We believe we offer ChatGPT in compliance with GDPR and other privacy laws,” the company said.

France, Ireland and Germany are all examining similar regulatory crackdowns. In Britain, the Information Commissioner’s Office said: “There really can be no excuse for getting the privacy implications of generative AI wrong.”

However, while the privacy watchdog has raised red flags, so far the UK has not gone as far as to threaten to ban ChatGPT. In an AI regulation white paper published earlier this month, the Government decided against a formal AI regulator. Edward Machin, a lawyer at the firm Ropes & Gray, says: “The UK is striking its own path, it is taking a much lighter approach.”

Several AI experts told The Telegraph that the real concerns about ChatGPT, Bard and others were less about the long-term consequences of some kind of killer, all-powerful AI than about the damage it could do in the here and now.

Juan José López Murphy, head of AI and data science at tech company Globant, says there are near-term issues with helping people spot deep fakes or false information generated by chatbots. “That technology is already here… it is about how we misuse it,” he says.

“Training ChatGPT on the whole internet is potentially dangerous due to the biases of the internet,” says computer expert Dame Wendy Hall. She suggests calls for a moratorium on development would likely be ineffective, since China is rapidly developing its own tools.

OpenAI appears alive to the possibility of a crackdown. On Friday, it posted a blog which said: “We believe that powerful AI systems should be subject to rigorous safety evaluations. Regulation is needed to ensure that such practices are adopted.”

Marc Warner, of UK-based Faculty AI, which is working with OpenAI in Europe, says regulators will still need to plan for the possibility that a super powerful AI may be on the horizon.

“It seems general artificial intelligence might be coming sooner than many expect,” he says, urging labs to stop the rat race and collaborate on safety.

“We have to be aware of what could happen in the future, we need to think about regulation now so we don’t develop a monster,” Dame Wendy says.

“It doesn’t need to be that scary at the moment… That future is still a long way away, I think.”

Fake images of the Pope might seem a long way from world domination. But, if you believe the experts, the gap is starting to shrink.

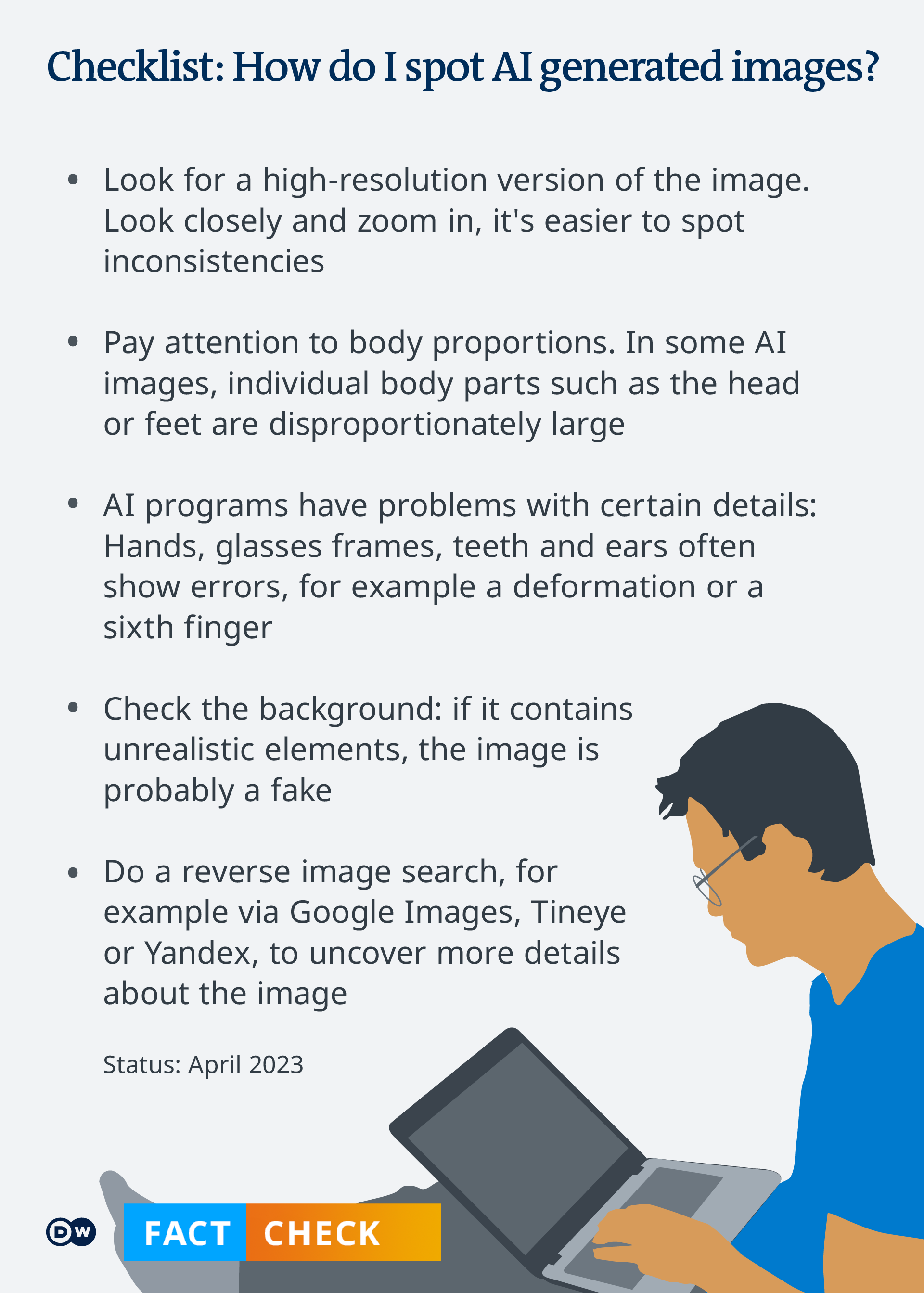

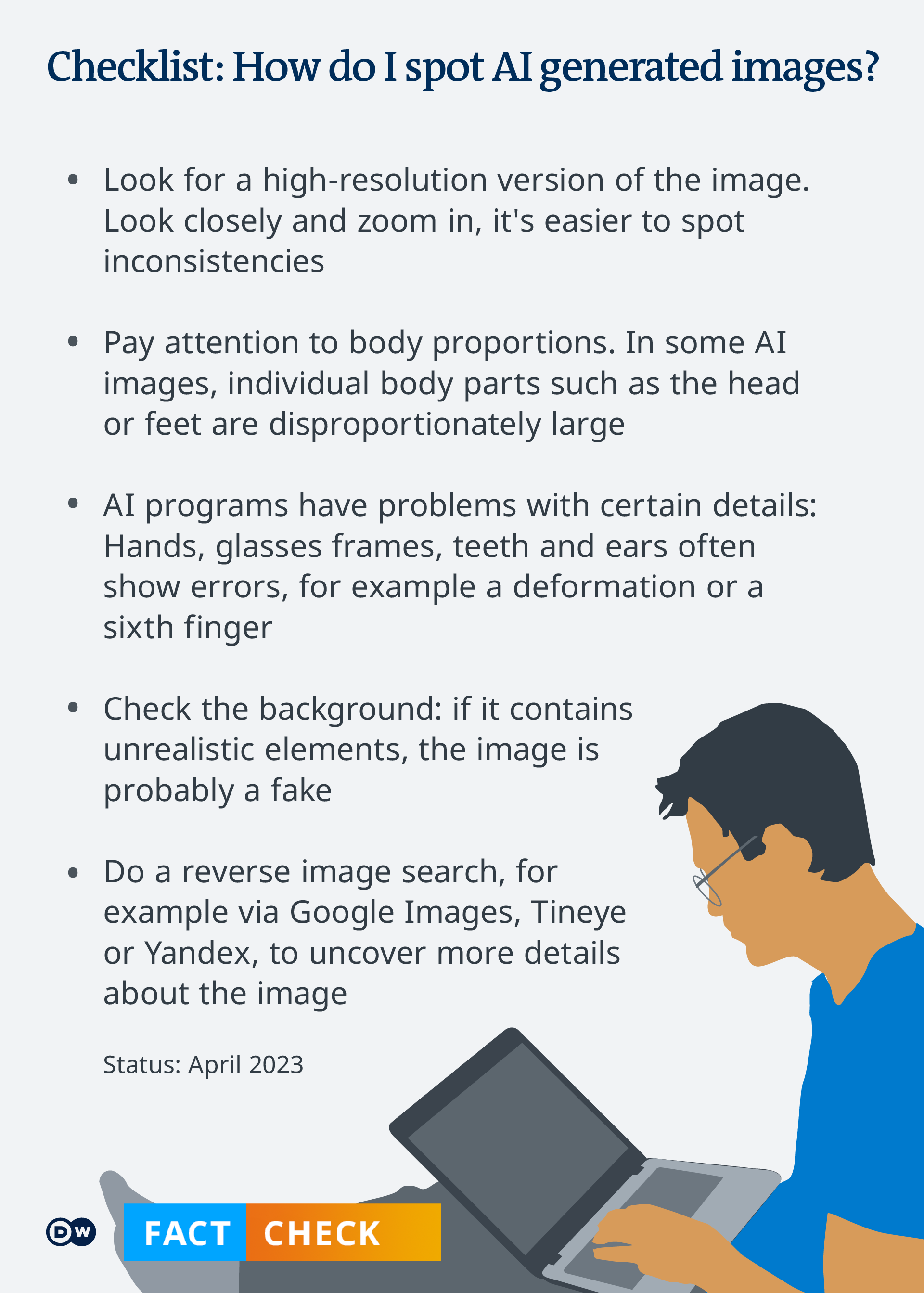

Fact check: How can I spot AI-generated images?

Joscha Weber | Kathrin Wesolowski | Thomas Sparrow

DW

April 9, 2023

Midjourney, DALL-E, DeepAI — images created with artificial intelligence tools are flooding social media. Some carry the risk of spreading false information. Which images are real and which are not? Here are a few tips.

https://p.dw.com/p/4PnBK

It has never been easier to create images that look shockingly realistic but are actually fake.

Anyone with an internet connection and access to a tool that uses artificial intelligence (AI) can create photorealistic images within seconds, and they can then spread them on social networks at breakneck speed.

In the last few days, many of these images went viral: Vladimir Putin apparently being arrested or Elon Musk holding hands with General Motors CEO Mary Barra, just to name two examples.

The problem is that both AI images show events that never happened. Even photographers have published portraits that turn out to be images created with artificial intelligence.

And while some of these images may be funny, they can also pose real dangers in terms of disinformation and propaganda, according to experts consulted by DW.

This AI-generated viral photo purports to show Elon Musk with GM CEO Mary Barra. It is fake.

An earthquake that never happened

Pictures showing the arrest of politicians like Russian President Vladimir Putin or former US President Donald Trump can be verified fairly quickly by users if they check reputable media sources.

Other images are more difficult to verify, such as those in which the people in the picture are not so well-known, AI expert Henry Ajder told DW.

One example: a German member of Parliament for the far-right AfD party spread an AI-generated image of screaming men on his Instagram account in order to show he was against the arrival of refugees.

And it's not just AI-generated images of people that can spread disinformation, according to Ajder.

He says there have been examples of users creating events that never happened.

This was the case with a severe earthquake that is said to have shaken the Pacific Northwest of the United States and Canada in 2001.

But this earthquake never happened, and the images shared on Reddit were AI-generated.

And this can be a problem, according to Ajder. "If you're generating a landscape scene as opposed to a picture of a human being, it might be harder to spot," he explains.

However, AI tools do make mistakes, even if they are evolving rapidly. As of April 2023, programs like Midjourney, DALL-E and DeepAI have their glitches, especially with images that show people.

DW’s fact-checking team has compiled some suggestions that can help you gauge whether an image is fake. But one initial word of caution: AI tools are developing so rapidly that these tips only reflect the current state of affairs.

1. Zoom in and look carefully

Many images generated by AI look real at first glance.

That's why our first suggestion is to look closely at the picture. To do this, search for the image in the highest-possible resolution and then zoom in on the details.

Enlarging the picture will reveal inconsistencies and errors that may have gone undetected at first glance.

2. Find the image source

If you are unsure whether an image is real or generated by AI, try to find its source.

You may be able to see some information on where the image was first posted by reading comments published by other users below the picture.

Or you may carry out a reverse image search. To do this, upload the image to tools like Google Image Reverse Search, TinEye, or Yandex, and you may find the original source of the image.

The results of these searches may also show links to fact checks done by reputable media outlets which provide further context.
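For an image that is already hosted at a public web address, the reverse image search described above can be started from prepared links. The sketch below only builds such links. The URL patterns are assumptions based on commonly used query formats, not documented interfaces of these services, and they may change or stop working; the example image address is hypothetical.

```python
from urllib.parse import quote

# ASSUMPTION: these query-string patterns are commonly used but unofficial,
# and the services may change them at any time.
SEARCH_URL_TEMPLATES = {
    "Google": "https://lens.google.com/uploadbyurl?url={image}",
    "TinEye": "https://tineye.com/search?url={image}",
    "Yandex": "https://yandex.com/images/search?rpt=imageview&url={image}",
}


def reverse_search_links(image_url: str) -> dict[str, str]:
    """Return one reverse-search link per service for a publicly hosted image."""
    encoded = quote(image_url, safe="")  # percent-encode the whole address
    return {
        name: template.format(image=encoded)
        for name, template in SEARCH_URL_TEMPLATES.items()
    }


# Hypothetical image address, used for illustration only.
for name, link in reverse_search_links("https://example.com/viral-photo.jpg").items():
    print(f"{name}: {link}")
```

If the image exists only as a local file, uploading it through each service's own web page, as described above, remains the straightforward route.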

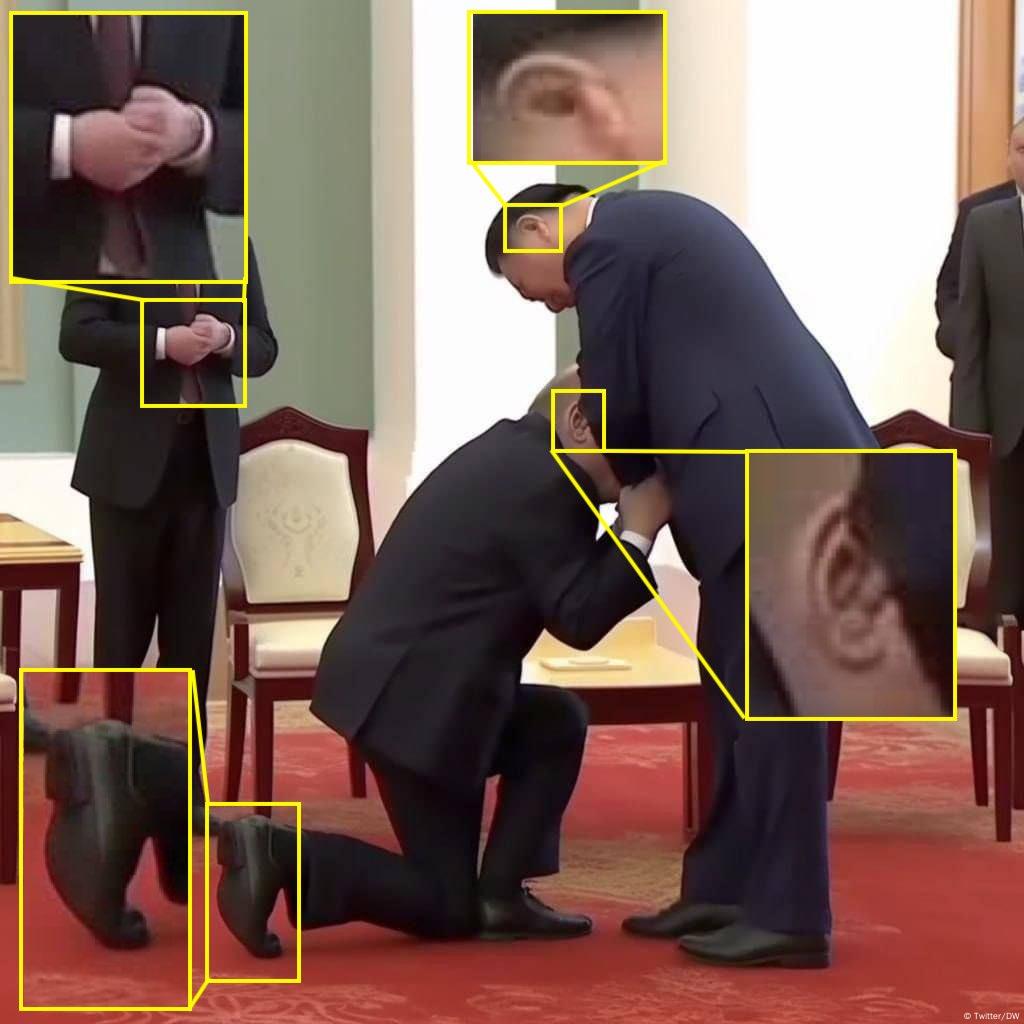

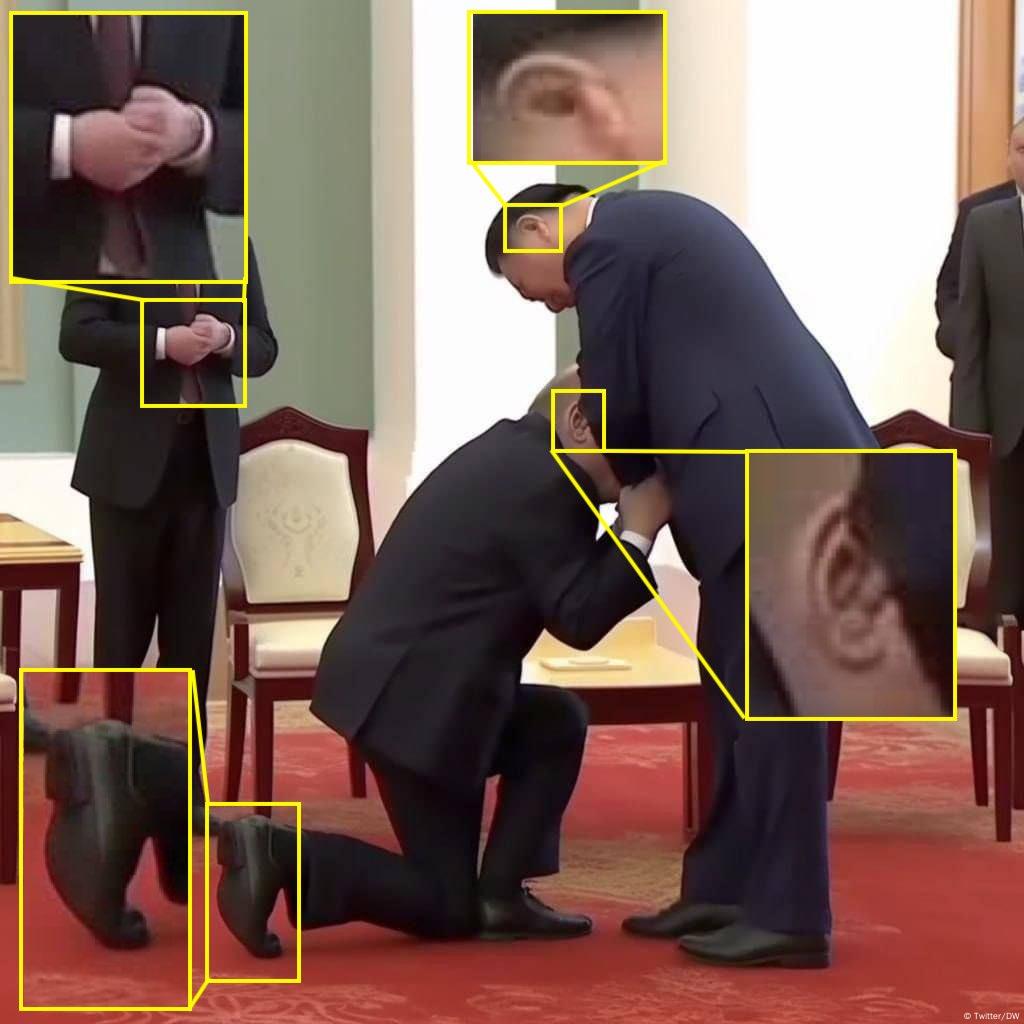

Putin is supposed to have knelt down in front of Xi Jinping, but a closer look shows that the picture is fake.

Image: Twitter/DW

3. Pay attention to body proportions

Do the depicted people have correct body proportions?

It is not uncommon for AI-generated images to show discrepancies when it comes to proportions. Hands may be too small or fingers too long. Or the head and feet do not match the rest of the body.

This is the case with the picture above, in which Putin is supposed to have knelt down in front of Xi Jinping. The kneeling person’s shoe is disproportionately large and wide. The calf appears elongated. The half-covered head is also very large and does not match the rest of the body in proportion.

4. Watch out for typical AI errors

Hands are currently the main source of errors in AI image programs like Midjourney or DALL-E.

People frequently have a sixth finger, such as the policeman to Putin's left in our picture at the very top.

Or also in these pictures of Pope Francis, which you’ve probably seen.

But did you realize that Pope Francis seems to only have four fingers in the right picture? And did you notice that his fingers on the left are unusually long? These photos are fake.

Other common errors in AI-generated images include people with far too many teeth, or glasses frames that are oddly deformed, or ears that have unrealistic shapes, such as in the aforementioned fake image of Xi and Putin.

Surfaces that reflect, such as helmet visors, also cause problems for AI programs, sometimes appearing to disintegrate, as in the alleged Putin arrest.

AI expert Henry Ajder warns, however, that newer versions of programs like Midjourney are becoming better at generating hands, which means that users won’t be able to rely much longer on spotting these kinds of mistakes.

5. Does the image look artificial and smoothed out?

The app Midjourney in particular creates many images that seem too good to be true.

Follow your gut feeling here: Can such a perfect image with flawless people really be real?

"The faces are too pure, the textiles that are shown are also too harmonious," Andreas Dengel of the German Research Center for AI told DW.

People’s skin in many AI images is often smooth and free of any irritation, and even their hair and teeth are flawless. This is usually not the case in real life.

Many images also have an artistic, shiny, glittery look that even professional photographers have difficulty achieving in studio photography.

AI tools often seem to design ideal images that are supposed to be perfect and please as many people as possible.

6. Examine the background

The background of an image can often reveal whether it was manipulated.

Here, too, objects can appear deformed; for example, street lamps.

In a few cases, AI programs clone people and objects and use them twice. And it is not uncommon for the background of AI images to be blurred.

But even this blurring can contain errors, as in the example above, which purports to show an angry Will Smith at the Oscars. The background is not merely out of focus but appears artificially blurred.
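The six tips can also be kept as a simple note-taking checklist while examining a picture. The sketch below is one hypothetical way to record observations. The class and field names are invented for illustration, and the result is a list of red flags noticed by a human reviewer, not an automated detector or a verdict.

```python
from dataclasses import dataclass, fields


@dataclass
class ImageCheck:
    """Manual observations for one image; every field is a human judgment."""

    details_look_wrong_when_zoomed: bool = False  # tip 1: zoom in
    no_credible_source_found: bool = False        # tip 2: find the source
    odd_body_proportions: bool = False            # tip 3: proportions
    typical_ai_errors: bool = False               # tip 4: hands, teeth, glasses, ears
    unnaturally_smooth_look: bool = False         # tip 5: too perfect
    deformed_or_odd_background: bool = False      # tip 6: background

    def red_flags(self) -> list[str]:
        """Names of all checks that were marked as suspicious."""
        return [f.name for f in fields(self) if getattr(self, f.name)]


# Example notes for a picture with an oversized shoe and a six-fingered hand.
notes = ImageCheck(odd_body_proportions=True, typical_ai_errors=True)
print(notes.red_flags())
```

An empty list does not prove an image is genuine; it only means none of the listed warning signs were noticed.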

Conclusion

Many AI-generated images can currently still be debunked with a little research. But technology is getting better and mistakes are likely to become rarer in the future. Can AI detectors like those hosted on Hugging Face help us detect manipulation?

Based on our findings, detectors provide clues, but nothing more.

The experts we interviewed tend to advise against their use, saying the tools are not developed enough. Even genuine photos are declared fake and vice versa.

Therefore, in case of doubt, the best thing users can do to distinguish real events from fakes is to use their common sense, rely on reputable media and avoid sharing the pictures.
