Anthropic’s CEO has expressed concerns about the use of AI for autonomous drones and surveillance.

By Sharon Zhang, Truthout. Published February 25, 2026

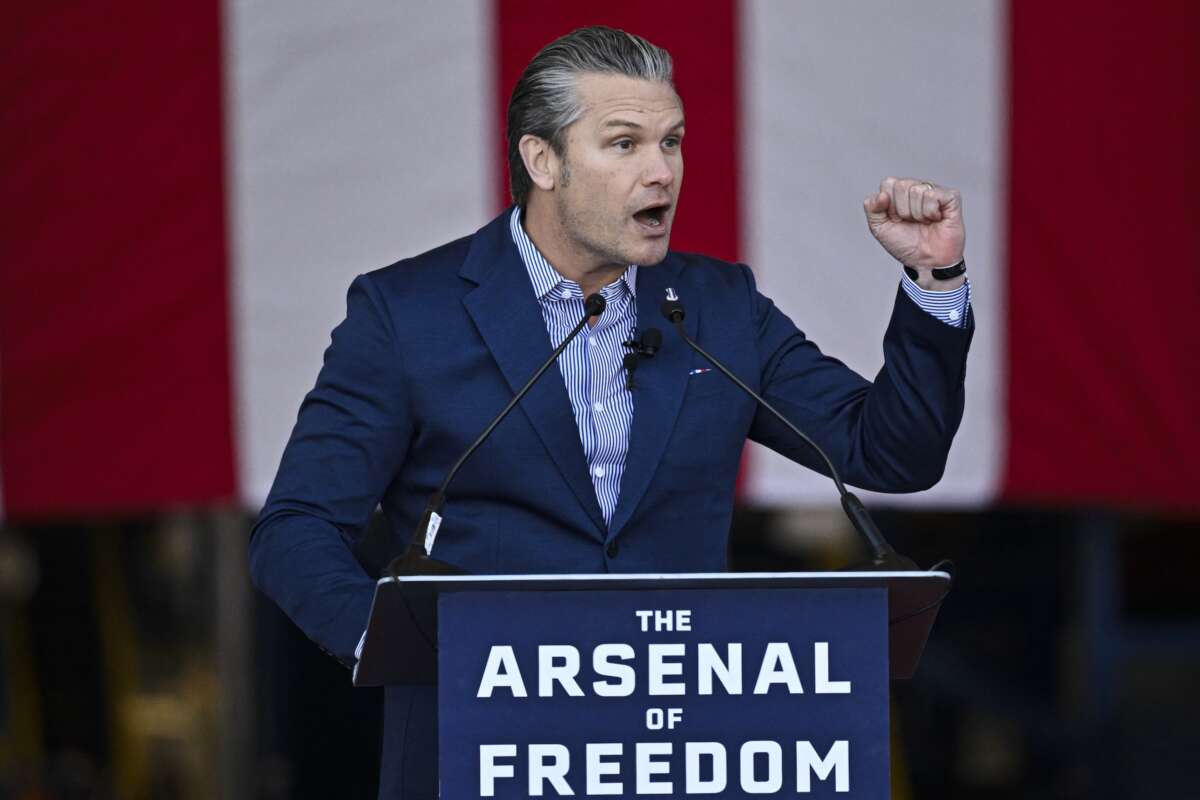

U.S. Defense Secretary Pete Hegseth speaks at Blue Origin in Cape Canaveral, Florida, on February 2, 2026. Miguel J. Rodriguez Carrillo / AFP via Getty Images

Secretary of Defense Pete Hegseth has threatened Anthropic with blacklisting if the AI company refuses to allow its tools to be used for autonomous drone attacks or mass surveillance – a chilling show of the Pentagon’s priorities.

In a meeting with the company on Tuesday, Hegseth said that the company must drop its demands for safety restrictions by Friday at 5:01 pm. Otherwise, officials warned, the Pentagon will declare the company a “supply chain risk” and effectively blacklist it. Alternatively, and paradoxically, it will invoke the Defense Production Act to force Anthropic to comply.

Sources familiar with the meeting have said that the company’s representatives at the meeting expressed safety concerns over AI’s ability to reliably control weapons. A lack of regulations over AI use in mass surveillance could also pose risks, they reportedly told officials.

The company’s CEO, Dario Amodei, has repeatedly voiced concerns over these issues.

“I am worried about the autonomous drone swarm, right? The constitutional protections in our military structures depend on the idea that there are humans who would, we hope, disobey illegal orders. With fully autonomous weapons, we don’t really have those protections,” Amodei said in an interview with podcaster Wes Roth.

Amodei has also warned that AI could access and process private conversations captured by devices in people’s homes, and that the results could be used to label people politically and “undermine” the Fourth Amendment.

However, Anthropic announced after its meeting with Hegseth that it is dropping a central safety policy that would put guardrails on its AI development to mitigate risks posed to society by AI. It’s unclear if the changes are related to the Pentagon’s demands, but the timing raises suspicion.

Legal experts have said it’s unclear if the Trump administration could use the Defense Production Act to force Anthropic’s hand.

Anthropic is in negotiations for a contract with the Pentagon, and has reportedly previously offered to allow its AI systems to be used for missile and cyber defense. However, the Pentagon is saying that the company must allow use of its tools for all military purposes.

The company’s AI model Claude was reportedly used by the Pentagon during its operation to bombard Caracas and abduct Venezuelan President Nicolás Maduro, an operation that killed 83 people, including civilians. A Wall Street Journal report, citing sources familiar with the matter, said that the Pentagon made use of Claude through Anthropic’s partnership with Palantir, which has a contract with the U.S. government.

A Pentagon official said in a statement that Hegseth’s demands have “nothing to do with mass surveillance and autonomous weapons being used,” but the Trump administration has doggedly worked to overstep its legal authority to inflict more violence on, and expand surveillance of, Americans.

“I want to clarify what responsible AI means at the Department of War. Gone are the days of equitable AI, and other DEI and social justice infusions that constrain and confuse our employment of this technology,” Hegseth said during an address at SpaceX’s headquarters in January. “We will not employ AI models that won’t allow you to fight wars.”

Experts have warned that the use of AI models for warfare is dangerous. A recent study in which a researcher pitted ChatGPT, Claude, and Gemini models against each other in 21 war scenarios found that one of the models deployed a nuclear weapon in 95 percent of the simulated games.
