The latest technology can prove decisive in war. Think of the atomic bomb in World War II. Or the stirrup in the Mongol conquest of Europe and the Middle East.

More recently, after the two sides had been deadlocked for decades, Azerbaijan defeated Armenia in 2020 in a matter of days and took over the enclave of Nagorno-Karabakh. Armenia prided itself on its powerful army and fearsome soldiers. They were no match for the drones that Azerbaijan bought with the proceeds from its oil exports.

“Azerbaijan used its drone fleet — purchased from Israel and Turkey — to stalk and destroy Armenia’s weapons systems in Nagorno-Karabakh, shattering its defenses and enabling a swift advance,” reported the Washington Post‘s Robyn Dixon. “Armenia found that air defense systems in Nagorno-Karabakh, many of them older Soviet systems, were impossible to defend against drone attacks, and losses quickly piled up.”

Ukraine has similarly used drone technology to level the battlefield in its war against Russia. The Kremlin has more money, more soldiers, more heavy artillery, even more drones than Ukraine. But the Ukrainians have proven more adept at producing new varieties of drones that can substitute for scarce Patriot missiles in defending against Russia’s daily aerial assault. Ukraine has also used a variety of drones to strike at targets deep in Russian territory. Drones are the slingshot by which little David hopes to bring down the Russian Goliath.

And now the war in Iran.

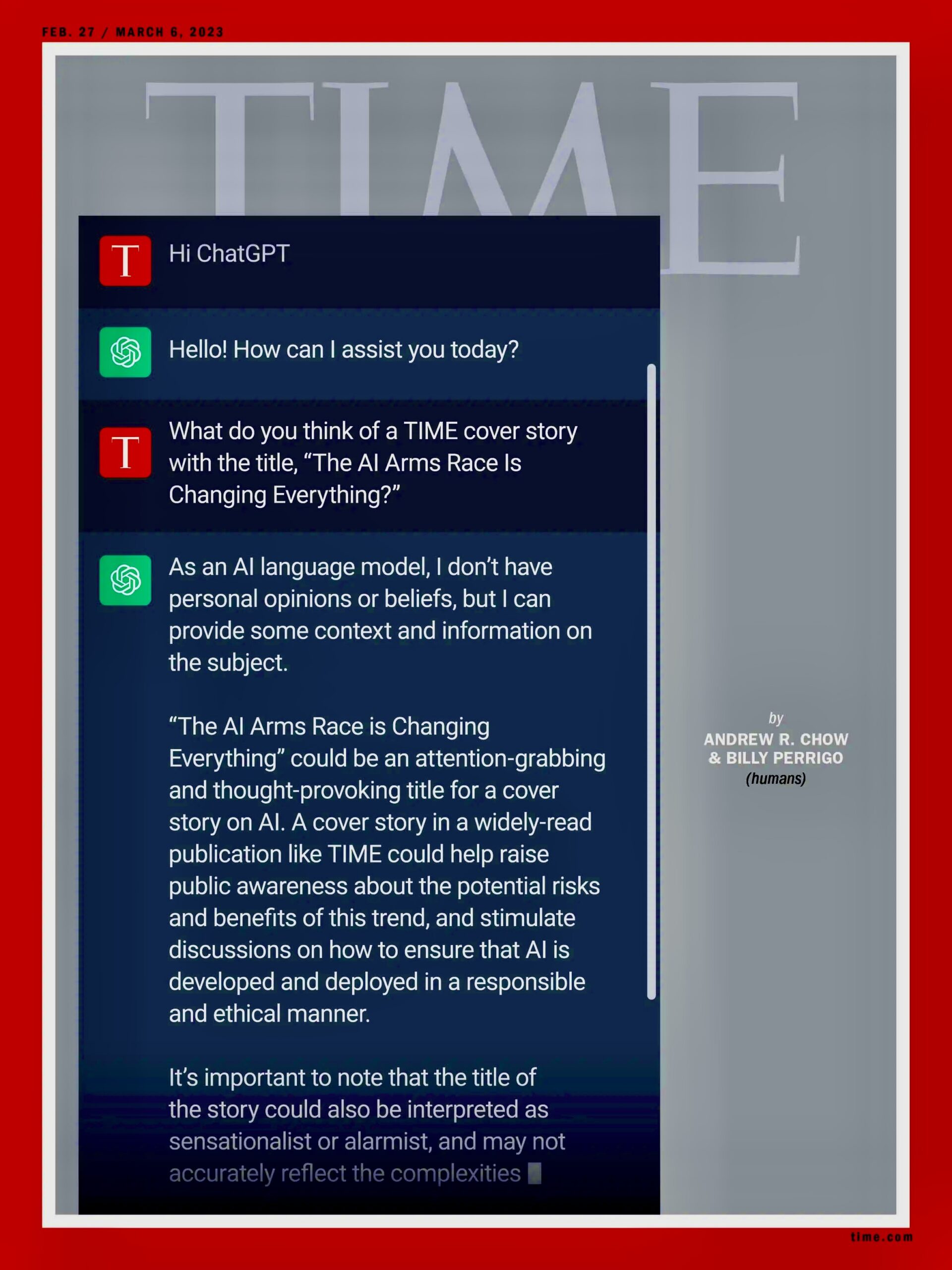

Perhaps Donald Trump was persuaded, whether by his generals, by his buddies in Silicon Valley, or by Israeli Prime Minister Benjamin Netanyahu, that American military superiority would make quick work of the Iranian military. In addition to the aircraft carriers, the stealth bombers, the Tomahawk missiles, and the High Mobility Artillery Rocket System, Trump could also call upon the assistance of Claude and his buddies.

Claude, of course, is the artificial intelligence system developed by the company Anthropic, which had objected to the misuse of its model in the U.S. raid that captured Venezuelan leader Nicolas Maduro and his wife. Trump retaliated against Anthropic’s caution by ordering the Pentagon to sever its relationship with Claude, only to discover that the AI was already too integrated into U.S. military operations. Not the first conscript ordered to fight against its will, Claude helped the Pentagon identify Iranian targets, prioritize them, and furnish precise coordinates. Going forward, however, the Pentagon will rely instead on OpenAI’s ChatGPT.

All of this technological sophistication has not brought Donald Trump the quick victory he so desired. What Trump and company didn’t anticipate—but which any reasonably competent foreign policy professional could have pointed out if DOGE hadn’t cashiered so many of them—was that Iran could rely on much simpler tactics to stymie the combined U.S.-Israeli forces.

History provides plenty of examples of adversaries who successfully defeated U.S. forces despite facing much more technologically advanced weaponry. The Vietnamese endured massive bombing campaigns, Iraqi insurgents relied on IEDs to destroy U.S. infantry forces, and the Taliban outwaited the occupying army. These experiences presumably inspired Donald Trump to promise, as a presidential candidate, not to get involved in any quagmires or expose U.S. troops to such risks again.

All that went out the window when he attacked Iran.

And now, Iran has used its location and the unchangeable facts of geography to their full advantage. It has effectively blocked the Strait of Hormuz, restricted the flow of oil and natural gas to the global market, and driven up the price of petroleum at the pump. It has also relied on the utter stupidity of the industrialized world. If global gas-guzzlers had weaned themselves off their addiction to fossil fuels, as they had promised in climate negotiations, the reduced flow of oil would not now be having such a great impact.

To turn the tables, the United States could seize Kharg Island, located in the Persian Gulf about 400 miles north of the Strait of Hormuz. If Iran were to lose the island, the transit point for 90 percent of Iran’s crude oil exports, much of its geographic advantage would disappear. Or would it?

Although it might not represent a huge challenge for the United States to take the island, it’s another matter altogether to hold it. The Iranians could keep up a steady barrage of aerial strikes on occupation forces hunkered down on the exposed island. The continued disruption of Strait traffic—along with the destruction of Iranian energy infrastructure—would not achieve Trump’s current primary goal: the reduction of prices at the pump.

What might seem like a war between two distinct adversaries—Armenia vs. Azerbaijan, Russia vs. Ukraine, United States vs. Iran—often boils down to a very different kind of conflict. The battle on the ground frequently pits old tactics against new tech. Sometimes the gadgets win; sometimes old-school approaches prevail.

Many countries still go to war believing that God is on their side. Just as dangerous are those that believe that they will win because technology is on their side.

Taking Humans out of the Loop

The era of the “intelligent kill web” has arrived.

The military planner sits like a venomous spider at the center of a web of AI applications that calculate targets, probabilities, and complex interactions faster than any human can comprehend. Linked to actual weapons, these AI models conduct war with ever increasing efficiency and lethality. The execution of kill chains—which connect the identification of a target with its destruction—has been compressed to mere seconds. In a targeting exercise conducted by the U.S. Air Force in January, the AI system was over 100 times faster than its human counterpart; it also achieved a “tactical viability” rate of 97 percent compared to the human’s 48 percent.

Such figures are no consolation to the families of the victims of the U.S. bombing of a primary school in Iran on February 28 that killed nearly 200 people, mostly little girls. The targeting of the U.S. aerial campaign was orchestrated by Maven, the AI platform designed by Palantir. But don’t blame the robots. As Kevin Baker points out in The Guardian, it’s people who are responsible for catastrophes like this: the ones who failed to update the database of targets, who designed Maven, and who put these systems at the center of their battle plans.

Analysts worry that countries like the United States are on the verge of removing people from the kill web because the human mind just slows things down and the smallest advantage can prove critical in determining the outcome of a battle.

That is certainly a concern. Equally terrifying, as the war in Iran is proving, is to keep a human being like Secretary of War Pete Hegseth at the center of the kill web. In other words, the only thing worse than an intelligent kill web is a stupid kill web.

Or, to put it more dismally, any human at the center of the kill web will be as stupid as Pete Hegseth because that’s just a function of the order of magnitude that currently separates cyberspace and meatspace.

Will AI, without human guidance, escalate a war to the nuclear threshold and beyond? This is not a consoling thought, but at this point, frankly, the humans that run operations in the Trump administration are not morally distinguishable from killer robots.

Cyberoperations

To assassinate Iran’s top leader Ayatollah Khamenei, Israeli cyber-operatives hacked into the traffic cameras in Tehran. According to a Financial Times report:

Israel gained access to the cameras years ago, and found that one particular camera was angled in such a way that it showed where members of Khamenei’s security team parked their cars. Through the cameras, Israeli intelligence built files on the guards’ addresses, work schedules, and who they were assigned to protect. On the day of the attack, Israel and the US also disrupted cellular service on Tehran’s Pasteur Street, where Khamenei was assassinated, so those trying to reach the bodyguards and deliver possible warnings would receive busy signals.

Some years ago, the United States and Israel collaborated on smuggling the Stuxnet worm into Iran’s nuclear operations, which prompted the centrifuges that enrich uranium to spin out of control and destroy themselves. It was only a temporary setback for Iran. For the world, however, the consequences have been irreversible, given that this first large-scale cyberattack kicked off a digital arms race.

During this current war, Iran has conducted cyberoperations of its own, such as targeting a medical devices company and hacking into FBI Director Kash Patel’s email. However, Iran is at a serious disadvantage. The United States and Israel have been pouring money into such technologies for years.

So, too, has the Kremlin. Russian cyberops are especially widespread. U.S. media has focused on Russian efforts to swing U.S. elections, but Russia has focused most of its attention on Europe. There it has engaged in conventional sabotage, such as hiring single-use operatives to plant explosives, set fires, and generally cause havoc. The even more destabilizing operations, however, are hidden from view because they take place in cyberspace.

Beginning in September 2024, for instance, a new Russian group nicknamed Laundry Bear began to hack into the accounts of Dutch police officials and conduct cyber-espionage against high-tech companies. The Baltic countries have been dealing for years with Russian cyber operations that have jammed GPS navigation near airports, disrupted underwater cables, and hacked into energy systems. In one recent example, anonymous social media accounts started to call for the secession of a majority-Russian region around the Estonian city of Narva. The historical parallels are unnerving. Similar calls for secession, also stage-managed by Moscow, precipitated the Crimean and Donbas crises in 2014 that led to Russian intervention and war in Ukraine.

The Genie and the Bottle

Scientists warn against the futility of trying to stop scientific advances. The nuclear genie, despite some intermittent efforts at imposing controls, has not been stuffed back into the bottle, not even halfway. A similar debate is taking place today around AI, between techno-optimists and techno-pessimists.

One way around this debate has been to furnish AI with hard-and-fast constraints, something like the laws that Isaac Asimov imagined for his fictional robots. Anthropic has drafted a “constitution” for Claude that forbids it from, among other things, creating “cyberweapons or malicious code that could cause significant damage if deployed” or engaging “in an attempt to kill or disempower the vast majority of humanity or the human species as a whole.”

That seems reasonable. But when Donald Trump took office in 2025, he eliminated all efforts to apply such rules across the industry. Rules, regulations, laws—these are all impediments to “making America great again” or, more accurately, to preventing Trump from assuming autocratic control. So, Claude and its constitution are now out of the loop, much as Trump has taken the U.S. constitution out of the loop.

In any case, talk of techno-utopias and techno-apocalypses is really just a matter of projection. AI reflects the best and worst of humanity. “Garbage in, garbage out,” goes the slogan of Silicon Valley. Instead of just focusing on treating the end product, then, it would be better to address the waste farther upstream, nearer to the source. That means more funds for education, not just for science and technology but the ethics involved in translating discoveries into products.

Ah, but wait: the Trump administration is also cutting the funding to research and education. The secretary of health and human services is a fount of junk science. The executive branch is governed by the morality of Mordor.

It is a horrifying aspect of today’s politics in the United States that AI is an improvement on all that. Intelligence of whatever variety trumps stupidity almost every time, though not so much in U.S. elections.

Speaking of elections, now that Claude has been kicked out of the Pentagon, maybe it should run for president.

Cambridge, MA -- Artificial intelligence is increasingly being used to help optimize decision-making in high-stakes settings. For instance, an autonomous system can identify a power distribution strategy that minimizes costs while keeping voltages stable.

But while these AI-driven outputs may be technically optimal, are they fair? What if a low-cost power distribution strategy leaves disadvantaged neighborhoods more vulnerable to outages than higher-income areas?

To help stakeholders quickly pinpoint potential ethical dilemmas before deployment, MIT researchers developed an automated evaluation method that balances the interplay between measurable outcomes, like cost or reliability, and qualitative or subjective values, such as fairness.

The system separates objective evaluations from user-defined human values, using a large language model (LLM) as a proxy for humans to capture and incorporate stakeholder preferences.

The adaptive framework selects the best scenarios for further evaluation, streamlining a process that typically requires costly and time-consuming manual effort. These test cases can show situations where autonomous systems align well with human values, as well as scenarios that unexpectedly fall short of ethical criteria.

“We can insert a lot of rules and guardrails into AI systems, but those safeguards can only prevent the things we can imagine happening. It is not enough to say, ‘Let’s just use AI because it has been trained on this information.’ We wanted to develop a more systematic way to discover the unknown unknowns and have a way to predict them before anything bad happens,” says senior author Chuchu Fan, an associate professor in the MIT Department of Aeronautics and Astronautics (AeroAstro) and a principal investigator in the MIT Laboratory for Information and Decision Systems (LIDS).

Fan is joined on the paper by lead author Anjali Parashar, a mechanical engineering graduate student; Yingke Li, an AeroAstro postdoc; and others at MIT and Saab. The research will be presented at the International Conference on Learning Representations.

Evaluating ethics

In a large system like a power grid, evaluating the ethical alignment of an AI model’s recommendations in a way that considers all objectives is especially difficult.

Most testing frameworks rely on pre-collected data, but labeled data on subjective ethical criteria are often hard to come by. In addition, because ethical values and AI systems are both constantly evolving, static evaluation methods based on written codes or regulatory documents require frequent updates.

Fan and her team approached this problem from a different perspective. Drawing on their prior work evaluating robotic systems, they developed an experimental design framework to identify the most informative scenarios, which human stakeholders would then evaluate more closely.

Their two-part system, called Scalable Experimental Design for System-level Ethical Testing (SEED-SET), incorporates quantitative metrics and ethical criteria. It can identify scenarios that effectively meet measurable requirements and align well with human values, as well as scenarios that fall short on either count.

“We don’t want to spend all our resources on random evaluations. So, it is very important to guide the framework toward the test cases we care the most about,” Li says.

Importantly, SEED-SET does not need pre-existing evaluation data, and it adapts to multiple objectives.

For instance, a power grid may have several user groups, including a large rural community and a data center. While both groups may want low-cost and reliable power, each group’s priority from an ethical perspective may vary widely.

These ethical criteria may not be well-specified, so they can’t be measured analytically.

The power grid operator wants to find the most cost-effective strategy that best meets the subjective ethical preferences of all stakeholders.

SEED-SET tackles this challenge by splitting the problem into two, following a hierarchical structure. An objective model considers how the system performs on tangible metrics like cost. Then a subjective model that considers stakeholder judgements, like perceived fairness, builds on the objective evaluation.

“The objective part of our approach is tied to the AI system, while the subjective part is tied to the users who are evaluating it. By decomposing the preferences in a hierarchical fashion, we can generate the desired scenarios with fewer evaluations,” Parashar says.
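The two-layer idea can be illustrated with a toy example. The Python sketch below is not the researchers' code: the `Scenario` class, the cost and reliability formulas, and the `fairness_weight` parameter are all assumptions invented for illustration. It only shows how a subjective score might be built on top of objective metrics rather than computed from the raw scenario.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """Hypothetical scenario: share of available power sent to each user group."""
    rural_share: float
    datacenter_share: float


def objective_metrics(s: Scenario) -> dict:
    """Objective layer: measurable outcomes (toy formulas, assumed for illustration)."""
    # Assumption: delivering power to the rural community costs more per unit.
    cost = 100.0 * s.rural_share + 60.0 * s.datacenter_share
    return {
        "cost": cost,
        # Assumption: a group is fully reliable once it receives half the supply.
        "rural_reliability": min(1.0, s.rural_share / 0.5),
        "datacenter_reliability": min(1.0, s.datacenter_share / 0.5),
    }


def subjective_score(metrics: dict, fairness_weight: float) -> float:
    """Subjective layer: scores the objective metrics, not the raw scenario."""
    gap = abs(metrics["rural_reliability"] - metrics["datacenter_reliability"])
    # Higher is better: penalize cost, and penalize the reliability gap
    # in proportion to how much this stakeholder cares about fairness.
    return -metrics["cost"] / 100.0 - fairness_weight * gap


even = Scenario(rural_share=0.5, datacenter_share=0.5)
skewed = Scenario(rural_share=0.2, datacenter_share=0.8)

for weight in (0.0, 1.0):
    best = max((even, skewed),
               key=lambda s: subjective_score(objective_metrics(s), weight))
    print(f"fairness_weight={weight}: preferred {best}")
```

With these made-up numbers, a stakeholder who gives fairness no weight prefers the cheaper, skewed allocation, while a stakeholder who weights fairness at 1.0 prefers the even split. The objective layer is identical in both cases; only the subjective layer changes.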

Encoding subjectivity

To perform the subjective assessment, the system uses an LLM as a proxy for human evaluators. The researchers encode the preferences of each user group into a natural language prompt for the model.

The LLM uses these instructions to compare two scenarios, selecting the preferred design based on the ethical criteria.

“After seeing hundreds or thousands of scenarios, a human evaluator can suffer from fatigue and become inconsistent in their evaluations, so we use an LLM-based strategy instead,” Parashar explains.
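A pairwise comparison of this kind might look roughly like the following Python sketch. It is a hypothetical illustration, not the SEED-SET prompt or code: the prompt wording, the `build_prompt` and `compare` helpers, and the `judge` callable are all assumptions. No real LLM service is called; `judge` stands in for whatever model a team would plug in, and `stub_judge` is a fixed placeholder so the example runs on its own.

```python
from typing import Callable


def build_prompt(preferences: str, scenario_a: dict, scenario_b: dict) -> str:
    """Encode one user group's preferences and two scenarios as a text prompt."""
    return (
        "You are evaluating two power-distribution scenarios.\n"
        f"Stakeholder preferences: {preferences}\n"
        f"Scenario A: {scenario_a}\n"
        f"Scenario B: {scenario_b}\n"
        "Answer with exactly one letter, A or B, for the preferred scenario."
    )


def compare(preferences: str, scenario_a: dict, scenario_b: dict,
            judge: Callable[[str], str]) -> str:
    """Ask the judge which scenario it prefers; return 'A' or 'B'."""
    reply = judge(build_prompt(preferences, scenario_a, scenario_b))
    choice = reply.strip().upper()
    if choice not in {"A", "B"}:
        # Reject free-form answers instead of guessing what the judge meant.
        raise ValueError(f"unexpected judge reply: {reply!r}")
    return choice


def stub_judge(prompt: str) -> str:
    """Placeholder for an LLM call (assumption): always answers ' b' with a newline."""
    return " b\n"


winner = compare(
    "Outages should not fall mainly on the rural community.",
    {"cost": 68, "rural_outage_hours": 6},
    {"cost": 80, "rural_outage_hours": 1},
    judge=stub_judge,
)
print(winner)
```

Keeping the judge behind a plain callable means the same comparison logic can be exercised with a stub, a human rater, or a model, and malformed answers are rejected rather than silently counted.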

SEED-SET uses the selected scenario to simulate the overall system (in this case, a power distribution strategy). These simulation results guide its search for the next best candidate scenario to test.
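The general shape of such a loop, in which each simulation result influences which scenario is tested next, can be sketched in a few lines of Python. This is a simplified stand-in and not the SEED-SET selection strategy: the one-dimensional scenario parameter, the toy `simulate` function, and the `acquisition` rule (nearest known score plus a bonus for distance from tested points) are all assumptions chosen to keep the example short.

```python
def simulate(x: float) -> float:
    """Toy simulator (assumed): score of a scenario parameterized by x in [0, 1]."""
    return -(x - 0.7) ** 2


def acquisition(x: float, evaluated: dict, explore: float = 0.5) -> float:
    """Value of testing x next, given the results collected so far."""
    nearest = min(evaluated, key=lambda e: abs(e - x))
    # Exploit: borrow the score of the closest tested scenario.
    # Explore: add a bonus for being far from anything tested so far.
    return evaluated[nearest] + explore * abs(nearest - x)


def adaptive_search(candidates: list, budget: int, start: float) -> dict:
    """Run up to `budget` simulations, choosing each new scenario adaptively."""
    evaluated = {start: simulate(start)}
    while len(evaluated) < budget:
        untested = [c for c in candidates if c not in evaluated]
        if not untested:
            break  # nothing left to try
        x = max(untested, key=lambda c: acquisition(c, evaluated))
        evaluated[x] = simulate(x)
    return evaluated


candidates = [i / 10 for i in range(11)]
results = adaptive_search(candidates, budget=5, start=0.0)
for x, score in results.items():
    print(f"x={x:.1f} score={score:.3f}")
```

The point of the sketch is the feedback structure: the next test case is picked from what earlier simulations revealed, rather than drawn at random or from a fixed grid.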

In the end, SEED-SET intelligently selects the most representative scenarios, both those that satisfy the objective metrics and ethical criteria and those that fail to align with them. In this way, users can analyze the performance of the AI system and adjust its strategy.

For instance, SEED-SET can pinpoint cases of power distribution that prioritize higher-income areas during periods of peak demand, leaving underprivileged neighborhoods more prone to outages.
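A check for that kind of disparity could be as simple as comparing average outage hours across income groups. The Python sketch below is illustrative only: the `"low"` and `"high"` labels, the outage figures, and the 1.5 threshold are invented for the example and do not come from the study.

```python
from statistics import mean


def outage_disparity(outages: list) -> float:
    """Ratio of mean outage hours in low-income vs. high-income neighborhoods.

    `outages` is a list of (income_group, outage_hours) pairs, where
    income_group is "low" or "high" (hypothetical labels).
    """
    low = [hours for group, hours in outages if group == "low"]
    high = [hours for group, hours in outages if group == "high"]
    if not low or not high:
        raise ValueError("need at least one neighborhood in each income group")
    high_mean = mean(high)
    if high_mean == 0:
        # Avoid dividing by zero when high-income areas see no outages at all.
        return float("inf") if mean(low) > 0 else 1.0
    return mean(low) / high_mean


def flag_scenarios(scenarios: dict, threshold: float = 1.5) -> list:
    """Names of scenarios whose disparity ratio exceeds the (assumed) threshold."""
    return [name for name, outages in scenarios.items()
            if outage_disparity(outages) > threshold]


scenarios = {
    "even": [("low", 2.0), ("high", 2.0)],
    "skewed": [("low", 6.0), ("low", 4.0), ("high", 1.0), ("high", 3.0)],
}
print(flag_scenarios(scenarios))
```

In this made-up data, only the scenario in which low-income neighborhoods average 2.5 times the outage hours of high-income ones is flagged for closer review.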

To test SEED-SET, the researchers evaluated realistic autonomous systems, like an AI-driven power grid and an urban traffic routing system. They measured how well the generated scenarios aligned with ethical criteria.

The system generated more than twice as many optimal test cases as the baseline strategies in the same amount of time, while uncovering many scenarios other approaches overlooked.

“As we shifted the user preferences, the set of scenarios SEED-SET generated changed drastically. This tells us the evaluation strategy responds well to the preferences of the user,” Parashar says.

To measure how useful SEED-SET would be in practice, the researchers will need to conduct a user study to see if the scenarios it generates help with real decision-making.

In addition to running such a study, the researchers plan to explore the use of more efficient models that can scale up to larger problems with more criteria, such as evaluating LLM decision-making.

###

This research was funded, in part, by the U.S. Defense Advanced Research Projects Agency.