MISES

By Ryan McMaken

The US Treasury Department last week released its monthly report on federal spending and revenue. March spending for the US federal government was up by more than $20 billion, or by nearly four percent, from March of last year. In spite of the administration’s claims last year that Trump would implement hundreds of billions in spending cuts, the US federal government is now spending at higher levels than anything seen since 2021 when covid-related spending surged above all previous historical peacetime levels.

Meanwhile, the US is on track to spend more than a trillion dollars on interest on the debt this year, and this number will only go up as interest rates on federal Treasurys look poised to rise further. Moreover, recent numbers reflect only a small amount of the true war costs coming out of the war with Iran. The full brunt of the runaway spending that will come out of this war has yet to be felt. Indeed, the war with Iran, with no end in sight, is now estimated to be on track to cost more than a trillion dollars above and beyond the current $900 billion Pentagon budget, going into 2027. If current trends continue, federal spending in 2027’s fiscal year will make 2026 spending look mild by comparison.

Federal Spending in 2026 Fiscal Year

According to the Treasury’s report, the US federal government spent $548 billion during March, an increase of $20.7 billion, or 3.9 percent over March of 2025. The total federal deficit for the month was up as well, with a total March deficit of $164.1 billion. That’s up from March 2025’s deficit of $160.5 billion.
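The percentage above can be checked directly from the two reported figures. The short Python sketch below is only an illustration, not the Treasury's method: the function name `year_over_year` is hypothetical, and the inputs are the rounded numbers quoted in this article (in billions of dollars), so the result is approximate.

```python
def year_over_year(current: float, increase: float) -> tuple[float, float]:
    """Return (prior-period value, percent change) from the current value
    and the absolute increase over the prior period."""
    prior = current - increase          # implied value one year earlier
    pct = increase / prior * 100        # change measured against the prior value
    return prior, pct


# Figures quoted in the article, in billions of dollars (rounded).
prior_spending, pct_change = year_over_year(current=548.0, increase=20.7)

print(f"Implied March 2025 spending: ${prior_spending:.1f} billion")
print(f"Year-over-year change: {pct_change:.1f}%")
```

Note that the change is measured against the earlier period (March 2025), not against the current month's total.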

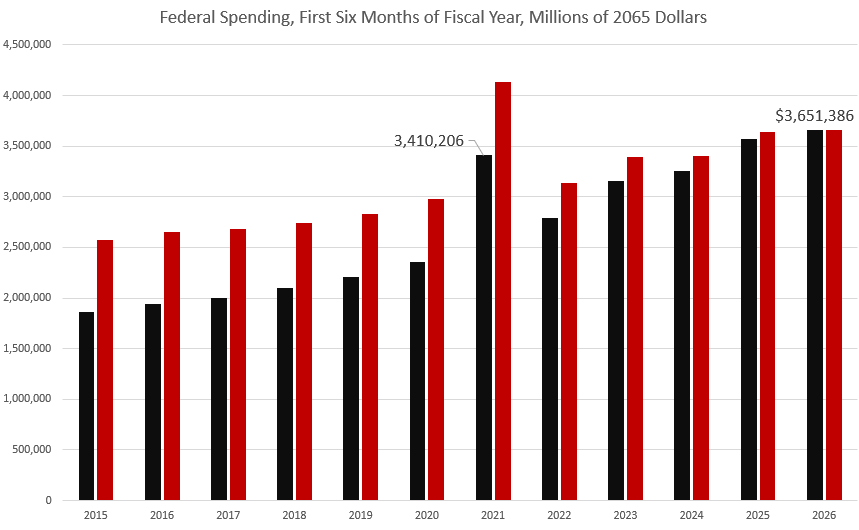

Looking at the first six months of the current fiscal year combined (a period that began on October 1, 2025), federal spending was up by $83.9 billion, or 2.3 percent. At $3.6 trillion for the fiscal year so far, that’s the highest level of spending ever, and is now even higher than spending during the days of juiced-up covid-panic outlays. Even when adjusted for inflation, spending for the first six months of this fiscal year is higher than in every year except 2021. In other words, the only year with higher spending was the one in which Trump ratcheted up spending toward the end of the 2020 calendar year, which was an election year. Trump continued to push historically high spending levels for the first four months of the 2021 fiscal year, until he finally left office at the end of January 2021.

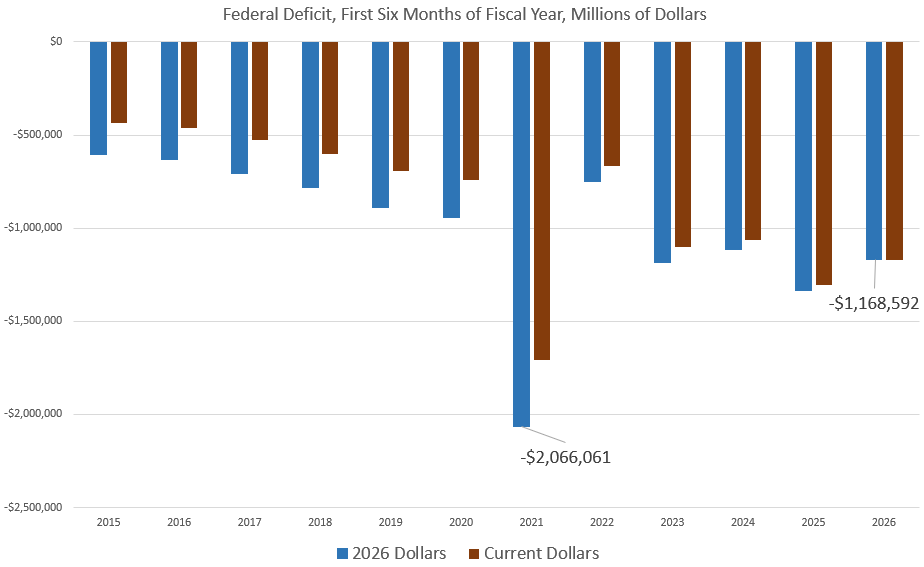

For the first six months of this fiscal year, the total deficit was the second-largest since 2021, at $1.17 trillion. Last year, the deficit for the first half of the fiscal year was $1.31 trillion. Adjusted for CPI inflation, the deficit during the first six months of this fiscal year came in behind 2021, 2023, and 2025. This year, however, as war expenses climb, and as Treasury yields rise, the US federal deficit is on track to top two trillion dollars for the first time since 2021.

War Costs Mount

It is likely that the US had already spent at least $16 billion on the Iran war in just the first three to five days. Overall, estimates suggest the war costs the US taxpayer approximately $2 billion per day. Most official estimates of the war’s cost greatly underestimate the total because official estimates are usually based on the original costs for munitions and ships. Replacement costs are likely to be much higher than the cost of the initial production. Moreover, official costs in recent decades have reliably underestimated the total price tags for wars.

For example, during the Iraq war, when George W. Bush’s economic advisor, Larry Lindsey, suggested the war might cost as much as $200 billion, he was fired. Defense Secretary Donald Rumsfeld said that number was “baloney.” The true cost of the war ended up being over $5 trillion. In other words, US government estimates of war costs are not to be taken seriously.

In any case, at current spending levels, the Iran war will likely cost US taxpayers at least $100 billion by early May. The war will greatly add to the total deficit by the end of the current fiscal year, and this will further add to the federal debt, which, as of the end of March, stood at $39.1 trillion. Since Trump was sworn in for his second term 14 months ago, the total federal debt has increased by $2.9 trillion. Indeed, if we combine the total debt accumulated during Trump’s first term and his second term so far, the US has added more than $10.4 trillion, or more than 26 percent of the entire national debt.

Interest Payments on the Federal Debt

With debt levels rising rapidly, and with Treasury yields no longer falling, interest payments on the national debt have become a substantial part of federal outlays. In March, for example, the US paid more than $102 billion, just for interest on the debt. For the first six months of this fiscal year, the total has been more than $622.5 billion. Measured against the roughly $3.6 trillion spent so far this fiscal year, that is about 17 cents of every dollar spent going to interest on the debt. The Treasury Department estimates that the total paid out in interest by the end of this year will be $1.3 trillion.
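To put the interest figures in proportion, they can be set against the spending totals reported earlier in this article. The Python sketch below is a rough check only: `share_of_outlays` is a hypothetical helper, and the inputs are the article's rounded figures (in billions of dollars), including the approximate $3.6 trillion six-month spending total, so the shares are estimates.

```python
def share_of_outlays(interest: float, outlays: float) -> float:
    """Return interest payments as a percentage of total outlays."""
    return interest / outlays * 100


# Figures quoted in the article, in billions of dollars (rounded).
march_share = share_of_outlays(interest=102.0, outlays=548.0)
half_year_share = share_of_outlays(interest=622.5, outlays=3600.0)

print(f"March: {march_share:.1f}% of spending went to interest")
print(f"First six months: {half_year_share:.1f}% of spending went to interest")
```

Because the article gives the interest totals as "more than" these amounts, the true shares are likely slightly higher than what this prints.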

That number is likely to increase going forward, since there is good reason to believe that yields on Treasurys will go up, and this will drive up overall debt service costs as the US must refinance trillions of its debt at higher interest rates. During 2026, more than $10 trillion of US debt will mature. Naturally, the US does not have the money to pay off these Treasurys, so the US will have to refinance these costs with new bond issuance. Yet, Treasury yields are higher now than they were when most Treasurys were initially issued, so this will further increase the cost of the debt. The ten-year yield, for example, has been near a nineteen-year high for the past six months.

Rising yields are partly due to the fact that rising deficits mean the US Treasury must continue to issue hundreds of billions in new Treasurys every few months. As the market is flooded with these Treasurys, prices go down, which means yields go up. Recent Treasury auctions have indeed hinted that demand for Treasurys is weak. On March 28, Fortune reported:

President Donald Trump’s war on Iran is colliding with U.S. debt investors, who demonstrated less appetite for Treasury securities as hopes for a quick end to the conflict evaporate …The short end of the yield curve is under extra pressure as soaring oil prices boost the inflation outlook and put additional rate cuts from the Federal Reserve on hold, with odds of a rate hike also increasing.

(The odds of a rate hike are rising because price inflation remains stubbornly near three percent, well above the Fed’s two-percent target. This is all in spite of the Fed’s repeated assurances in recent years that price inflation was headed rapidly toward the target level.)

Rising war costs, coupled with growing debt levels, will further encourage volatility:

The U.S. Treasury market has entered a period of intense volatility this April, marked by a dramatic steepening of the yield curve that has caught many institutional investors off guard. For the first time in over two years, the benchmark 10-year Treasury yield has surged past the 4.30% threshold, reaching as high as 4.39% in mid-April 2026. This shift signals a fundamental “re-pricing” of long-term risk as the market grapples with a resurgent inflation narrative and a geopolitical energy shock that has dismantled hopes for a “soft landing” in the second half of the year.

Thanks to rising debts, deficits, and yields, we can expect the central bank to intervene to monetize the debt and bring down yields. In fact, the Fed has been intervening to do exactly this since December when the Fed announced that it would begin buying up $40 billion of Treasurys per month. Since December, the Fed has added $163 billion to its portfolio, meaning the Fed has essentially created 163 billion new dollars in an effort to reduce Treasury yields. This will fuel more price inflation, either in assets or in consumer goods. This all comes in spite of years of Fed promises to eliminate the Fed’s stockpile of Treasurys, which peaked at $5.7 trillion in 2022. The persistence of this easy-money-financed purchasing of Treasurys has been a major factor in the 40-year highs in price inflation reached in 2022, and has reduced Americans’ purchasing power by nearly 25 percent since 2020.

We’ve come a long way from early 2025 when the Trump administration was claiming that it would slash federal spending with cuts through the so-called “Department of Government Efficiency” (DOGE). That was clearly an immense failure, with no more than $33 billion in confirmed cuts to federal spending. That’s far less than a single month’s interest payment on the federal debt, and less than a single month’s cost of carrying on the Iran war.

At the same time it was pretending to cut federal spending, the Trump administration was claiming that it would cut spending so much that it could eliminate the federal income tax and rely on import taxes instead. Anyone who knew anything about US fiscal policy knew that was absurd, of course. Taxes on imports, even with recent growth in revenue, amount to a small percentage of the federal government’s immense total receipts. But many Trump supporters bought the idea. In the real world, however, there will be no spending cuts and the income tax is certainly not in danger. Instead, the Trump administration has subjected American taxpayers to even more federal spending and higher interest rates fueled by larger debts.

Source: This article was published by the Mises Institute

The Mises Institute, founded in 1982, teaches the scholarship of Austrian economics, freedom, and peace. The liberal intellectual tradition of Ludwig von Mises (1881-1973) and Murray N. Rothbard (1926-1995) guides us. Accordingly, the Mises Institute seeks a profound and radical shift in the intellectual climate: away from statism and toward a private property order. The Mises Institute encourages critical historical research, and stands against political correctness.
